Organizational agility is the ability to change direction, while operational efficiency is the ability to execute that direction with less waste. They are not opposites but two axes of the same performance system, and companies that treat them as a trade-off tend to underperform on both — while the ones that run both as measurable outcomes tend to outperform on both.

Most leadership conversations about agility and efficiency pose them as a choice: agility is expensive, the argument goes, and efficiency is restrictive, so the organization has to pick one depending on the market. This framing is the quiet reason most transformation programmes fail, because a performance system cannot be built on a false binary. The companies that pulled ahead in the last five years did not pick between the two; they built operating rhythms that measured both.

What is operational agility, and how is it different from operational efficiency?

Understanding the two dimensions — and why conflating them produces underperformance on both.

Operational agility is how fast an organization can redirect resources, priorities, and decisions when conditions change, while operational efficiency is how much output the organization produces per unit of input — time, money, headcount, attention. Agility governs direction, and efficiency governs throughput, which is why the two capabilities answer different questions and require different instrumentation to manage.

The confusion happens because both words describe “doing things better,” and executives often use them interchangeably in board decks even though they are not interchangeable. A factory running at 98% capacity utilisation is highly efficient, but if the market shifts and that factory is producing the wrong product, efficiency becomes a liability rather than an advantage, because the organization is getting very good at producing output nobody wants to buy.

The inverse is equally dangerous. A team that pivots every three weeks in response to new signals looks agile, but if each pivot introduces rework, scrap, and context-switching, the organization is burning capital on motion that produces no output, turning agility into expensive improvisation rather than genuine adaptability.

For a deeper definitional grounding in what agility means at the company level, see our article on what organizational agility is, which covers the operating capabilities that separate it from agile methodology and team-level speed.

Why do most companies treat agility and efficiency as a trade-off?

The structural reason the false binary persists — and why measurement debt is the real culprit.

The trade-off myth comes from an older operating model. Twentieth-century manufacturing optimised for efficiency because demand was predictable and capital was expensive, and in that world agility meant idle capacity, which was a cost the business actively tried to minimise. The playbook reversed in the early 2010s when startups proved that speed of iteration could beat scale economics, and agility became the new orthodoxy while efficiency was dismissed as legacy thinking.

Both views are half-right and fully outdated, because the companies doing well today are neither the fastest nor the leanest — they are the ones that measure both dimensions in parallel and make trade-off decisions with the full picture in front of them rather than with half the instrumentation missing.

What keeps the trade-off alive is measurement debt. Most companies have mature systems for tracking efficiency — ERP, financial planning, utilisation dashboards — but very few have equivalent systems for tracking agility, so efficiency gets reported monthly in structured dashboards while agility gets discussed qualitatively in offsites. The dimension that is instrumented gets managed, and the dimension that only gets discussed gets forgotten, which is the structural reason the trade-off persists in most operating conversations: not because it is real, but because one side of the equation is measurable and the other is not.
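
As a rough illustration of what instrumenting agility could look like, the sketch below computes a decision-to-execution lag from two timestamps per decision. The `Decision` record, its field names, and the sample dates are assumptions made for this example, not a description of any particular tool.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class Decision:
    """One redirect decision. All field names are hypothetical."""
    name: str
    decided_on: date    # when leadership made the call
    executing_on: date  # when the first team actually started work on it


def decision_to_execution_lag_days(decisions: list[Decision]) -> float:
    """Median number of days between a decision and the start of execution."""
    return median((d.executing_on - d.decided_on).days for d in decisions)


# Illustrative data only, not taken from any real organization.
quarter_decisions = [
    Decision("Reprioritise onboarding work", date(2024, 1, 8), date(2024, 1, 22)),
    Decision("Pause legacy feature", date(2024, 2, 5), date(2024, 2, 12)),
    Decision("Shift budget to mid-market", date(2024, 3, 4), date(2024, 4, 15)),
]

lag = decision_to_execution_lag_days(quarter_decisions)
print(f"Median decision-to-execution lag: {lag} days")
```

Reported on the same monthly cadence as utilisation or cost figures, a number like this would give agility a place on the dashboard instead of only in the offsite.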

A broader walkthrough of the capability model that resolves this measurement gap is available in our complete guide to organizational agility, which covers the four operating dimensions companies need to instrument together.

What are operational agility examples from companies that get it right?

Three patterns that show up consistently in organizations running both dimensions well.

Pattern one: short planning cycles on long horizons. The most durable operating models set a three-to-five-year customer outcome at the top and replan the path to it every quarter, keeping the destination stable while the route changes. This is the operating logic that lets the same portfolio house experimental bets alongside scaled operations, because the long horizon provides the efficiency anchor while the short cycle provides the agility reset.

Pattern two: efficiency metrics measured at the portfolio level, not the project level. The companies that scale without losing agility do not ask every team to maximise efficiency; they ask the portfolio to. Some teams are deliberately inefficient because they are exploring, experimenting, and failing, while others are highly efficient because they are scaling proven bets. The metric leadership tracks is the portfolio balance between exploration and exploitation rather than individual team productivity. This is why value stream management has become a widely adopted model for companies running both dimensions in parallel.
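
A minimal sketch of the portfolio-level view might look like the following. The `Team` fields, the "explore"/"exploit" labels, and every number are illustrative assumptions; the point is only that efficiency and the exploration mix are computed across the portfolio, not per team.

```python
from dataclasses import dataclass


@dataclass
class Team:
    """A team in the portfolio. Fields and numbers are illustrative assumptions."""
    name: str
    mode: str      # "explore" or "exploit"
    output: float  # value delivered, in whatever unit the portfolio uses
    cost: float    # input consumed, in the same currency for every team


def portfolio_efficiency(teams: list[Team]) -> float:
    """Total output per unit of input across the whole portfolio."""
    return sum(t.output for t in teams) / sum(t.cost for t in teams)


def exploration_share(teams: list[Team]) -> float:
    """Fraction of total spend that goes to exploration bets."""
    explore_cost = sum(t.cost for t in teams if t.mode == "explore")
    return explore_cost / sum(t.cost for t in teams)


teams = [
    Team("Core billing", "exploit", output=900.0, cost=300.0),
    Team("Onboarding", "exploit", output=500.0, cost=200.0),
    Team("New-market pilot", "explore", output=50.0, cost=100.0),
]

print(f"Portfolio efficiency: {portfolio_efficiency(teams):.2f}")
print(f"Exploration share of spend: {exploration_share(teams):.0%}")
```

In this toy data the pilot team looks inefficient on its own, yet the portfolio as a whole still returns well over two units of output per unit of cost while keeping part of its spend on exploration.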

Pattern three: outcome-based goals paired with operational key results. This is where OKRs enter the conversation. A well-written objective sets an ambitious, directional change, which is the agility vector, while well-written key results measure the specific, efficient mechanisms by which that change is delivered. The structure of the framework itself resolves the tension rather than forcing the leadership team to choose between the two.

Why does the agility vs efficiency debate break down at scale?

How the coordination cost of agility grows non-linearly — and what that means for operating design.

At small scale, the organization can choose. A ten-person startup can pivot weekly and still ship product because the coordination overhead is low. A hundred-person business unit cannot, because at that size every pivot carries a cost: roadmaps have to be rewritten, dependencies have to be renegotiated, and hiring plans have to be revised. The cost of agility grows non-linearly as headcount rises.
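
One way to see the non-linearity is to count the possible person-to-person communication paths in a group, which is n(n-1)/2 for n people. Treating that count as a stand-in for coordination cost is a simplifying assumption made here for illustration, and `pairwise_channels` is a hypothetical helper name.

```python
def pairwise_channels(headcount: int) -> int:
    """Number of distinct person-to-person communication paths."""
    return headcount * (headcount - 1) // 2


for n in (10, 100):
    print(f"{n} people -> {pairwise_channels(n)} possible pairwise channels")
```

Under this assumption, a tenfold increase in headcount from 10 to 100 raises the number of possible channels from 45 to 4,950, a 110-fold increase.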

This is where most companies fail. They scale from 50 to 500 people while still operating the agility playbook that worked at 50, so every team pivots independently and the result is not agility but chaos dressed up as agility. Priorities contradict each other across functions, the sales team chases the old strategy while the product team builds for the new one, and the organization ends up producing more output while producing fewer outcomes.

The counter-failure is equally common. Companies scaling from 500 to 5,000 overcorrect into process discipline — gate reviews, steering committees, annual planning cycles — and while efficiency metrics look good on the dashboards, agility quietly collapses underneath the structure. When the market shifts, these organizations take 18 months to respond, and they often do not notice the lag because their reporting systems are all showing green.

The resolution is architectural. The organization needs a goal system that holds direction constant for long enough to execute efficiently while allowing the specific key results underneath to change as evidence arrives. Quarterly objectives with weekly key result check-ins deliver this balance, which is the discipline that adaptive planning frameworks formalise across operating teams. The objective carries the agility commitment while the key results carry the efficiency measurement, and when an organization outgrows its operating rhythm it is usually because one of those two layers has collapsed into the other.

How do OKRs resolve the agility-efficiency tension?

The structural reason OKRs work where other goal frameworks fall short of resolving both dimensions.

OKRs are one of the few goal frameworks that explicitly measure both dimensions in the same artifact. The objective sets the directional change, which is the agility layer, and the key results define the measurable mechanisms, which is the efficiency layer, so running them together resolves the tension by design rather than by management intervention.

A typical pattern looks like this. The objective — “Become the preferred onboarding experience for mid-market SaaS buyers” — is directional and can be rewritten the following quarter if the market shifts. The key results — “Reduce time-to-first-value from 14 days to 5,” “Increase onboarding completion from 62% to 85%,” and “Reduce customer support tickets in first 30 days by 40%” — are efficiency measures that tell the organization whether the direction is being executed with discipline or just being talked about.
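
The same example can be sketched as a small data structure. The start and target values come from the key results above; the `current` readings, the ticket index, and the unweighted averaging rule are assumptions added for illustration and are not a prescribed OKR scoring method.

```python
from dataclasses import dataclass


@dataclass
class KeyResult:
    description: str
    start: float
    target: float
    current: float  # hypothetical mid-quarter reading, not from the article

    def progress(self) -> float:
        """Share of the start-to-target distance covered, clamped to 0..1."""
        covered = (self.current - self.start) / (self.target - self.start)
        return max(0.0, min(1.0, covered))


@dataclass
class Objective:
    title: str
    key_results: list[KeyResult]

    def progress(self) -> float:
        """Unweighted mean of key result progress (an assumed scoring rule)."""
        return sum(kr.progress() for kr in self.key_results) / len(self.key_results)


okr = Objective(
    "Become the preferred onboarding experience for mid-market SaaS buyers",
    [
        KeyResult("Time-to-first-value (days)", start=14, target=5, current=8),
        KeyResult("Onboarding completion (%)", start=62, target=85, current=71),
        # Ticket volume indexed to 100 at baseline, so a 40% cut means 60.
        KeyResult("Support tickets in first 30 days (index)", start=100, target=60, current=90),
    ],
)

for kr in okr.key_results:
    print(f"{kr.description}: {kr.progress():.0%}")
print(f"Objective progress: {okr.progress():.0%}")
```

Because progress is measured as distance covered from start to target, the same formula handles key results that should go down (days, tickets) and those that should go up (completion rate).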

If the market shifts mid-quarter, the objective can be rewritten for the next cycle and the key results are replaced, but what persists is the operating rhythm — the quarterly cadence, the weekly check-in, the measurement discipline — with the rhythm carrying the efficiency while the content carries the agility. The two layers move at different speeds on purpose, and the separation is what keeps the system stable as it scales.

Most growing companies try to build this two-layer system on tools designed for one layer. Standalone goal-tracking platforms handle the agility side but carry no portfolio efficiency view. Project management platforms handle the efficiency side but have no structured objective layer above the work. Running both dimensions in separate tools means running them at separate cadences — which is the failure mode this article is about.

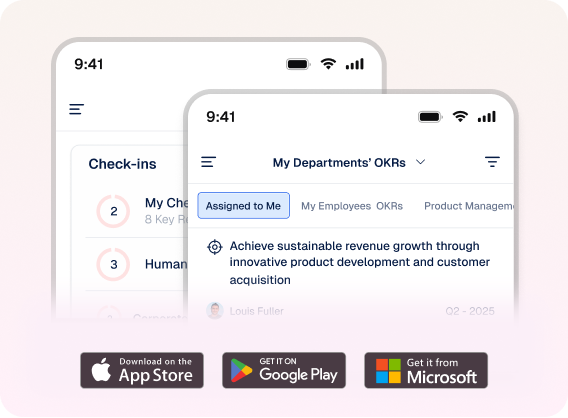

This is the architecture that Profit.co’s OKR management platform is built around. The platform tracks both axes natively, with ambitious objectives driving the agility dimension and key result progress, cycle time, and portfolio efficiency metrics driving the efficiency dimension. The 16 AI Agents, native PPM module, and 100+ integrations exist specifically to keep both dimensions measurable as the organization grows past the 500-person threshold where most goal systems start to break down under their own weight.

What is the playbook for running agility and efficiency together?

Four practical moves that separate organizations that instrument both from those that only discuss both.

Set direction quarterly, not annually. Annual plans are too slow to be agile and weekly plans are too fast to be efficient, which makes the quarter the natural unit: three months is long enough to execute a meaningful change and short enough to correct course four times a year without the strategic whiplash that kills team focus.

Measure agility at the portfolio level. The leadership team should not ask every team to be agile but should instead ask the portfolio to. Some teams should be running exploitation bets that are efficient, predictable, and scaling, while others should be running exploration bets that are agile, uncertain, and learning, and the mix between the two is the metric that captures organizational agility rather than any individual team’s speed.

Separate objectives from key results, and let them move at different speeds. Objectives should change quarterly or less often while key results should update weekly, because if the organization is changing objectives weekly it does not have an operating system but a state of anxiety, and if it is changing key results quarterly it does not have feedback but only reporting.

Instrument both dimensions. Measurement is not a consequence of operating discipline but the cause of it, which is why companies that instrument only one dimension tend to be systematically worse at both over time. The dashboards an organization builds are the behaviours it ends up with, and that feedback loop is the real mechanism behind every durable operating transformation.

The financial case becomes straightforward once both dimensions are instrumented. Teams that want to quantify the gap before investing in a new operating rhythm can model it directly through the OKR ROI Calculator, which isolates the efficiency lift from a well-run OKR programme against current baseline performance.

The pattern that runs through all four moves is the same: agility and efficiency are not choices but co-dependent measurements of the same system, and the organizations that internalise this stop picking between them and start instrumenting both, letting the gap between the two dimensions drive the learning that improves them both over time.

Frequently asked questions

What is operational agility?

Operational agility is the measurable ability of an organization to redirect resources, priorities, and decisions in response to changing conditions. It is tracked through cycle time, pivot frequency, and decision-to-execution lag rather than through subjective assessments in leadership meetings.

What is the difference between organizational agility and operational efficiency?

Organizational agility measures how quickly an organization changes direction; operational efficiency measures output per unit of input. Agility governs direction, efficiency governs throughput. Both must be instrumented, because measuring only one produces systematic underperformance on the other.

Can an organization be agile and efficient at the same time?

Yes. The two capabilities are co-dependent rather than opposed, and outcome-based goal frameworks like OKRs are the structural mechanism that holds both measurable in parallel, which is how growing companies sustain both direction changes and operating discipline simultaneously.

What are examples of operational agility?

Quarterly replanning against multi-year customer outcomes is one pattern. Measuring agility at the portfolio level rather than per-team is another. Both hold direction stable at the top while letting the execution path underneath change as evidence arrives through the cycle.

Why do companies treat agility and efficiency as a trade-off?

Most organizations have mature efficiency measurement (ERP, utilisation dashboards, financial reporting) but few have equivalent agility instrumentation. The imbalance skews management attention toward efficiency, which is why the trade-off feels real even when the underlying system isn’t forcing a choice.