5.1 The Critical Role of the OKR Champion

Every successful government OKR implementation we have studied has had a dedicated OKR Champion — a senior leader (typically Director or Deputy Director level) with genuine authority, credibility with both political and career leadership, and the time to actively manage the program through its first year. This person is not an administrator; they are a culture carrier. Their job is to model good OKR behavior, coach colleagues through the transition, resolve conflicts between competing objectives, and maintain momentum through the inevitable organizational resistance.

The OKR Champion role should be formally designated, with protected time (typically 20-30% of a senior staff member’s capacity in Phase 1-2, declining to 10% in Phase 3-4 as the culture develops). Naming an OKR Champion as an afterthought — someone who does it alongside a full workload — is one of the most reliable predictors of implementation failure.

5.2 Right-Sizing the OKR Hierarchy

One of the most common mistakes in government OKR implementation is creating too many levels of the OKR hierarchy too quickly. A large federal agency might be tempted to create OKRs at the Secretary level, Deputy Secretary level, Assistant Secretary level, program office level, division level, team level, and individual level simultaneously. This creates an unmanageable system that consumes enormous amounts of management time and produces little value.

ABSTRACT

The Objectives and Key Results (OKR) framework has transformed strategy execution at some of the world’s most complex organizations — from Google and Intel to healthcare systems and universities. Its adoption in government is accelerating, driven by the demand for greater accountability, the limitations of traditional performance management systems, and the availability of AI-native platforms designed for the public sector.

But OKRs in government require meaningful adaptation. The institutional context of government — its budget cycles, civil service structures, multiple principals, statutory mandates, and political accountability pressures — is sufficiently different from the corporate context in which OKR methodology was developed that a naive transplant fails.

This article is a comprehensive, field-tested implementation guide for federal and SLED (state, local, and education) agencies. It covers: the anatomy of a well-formed government OKR, the critical differences between OKRs and KPIs, common failure modes and how to avoid them, the six government-specific adaptations required for success, a four-phase implementation roadmap, the OKR management cadence, scoring methodology, and how Profit.co’s government platform supports each stage of the implementation lifecycle.

- 55+ Years of OKR History: from Intel in 1968 to government today

- 83% Better Mission Outcomes: agencies with full OKR cascade vs. plan-only

- 6 Gov-Specific Adaptations: required for OKR success in the public sector

1. The Origin and Logic of OKRs

Where the methodology came from, why it works, and what makes it uniquely suited to the public sector’s accountability challenges.

The OKR methodology was invented by Andy Grove at Intel in the late 1960s, adapted from Peter Drucker’s Management by Objectives (MBO) framework. Grove’s insight was simple but powerful: organizations become aligned and effective when every person, at every level, can answer two questions clearly — “What am I trying to achieve?” (the Objective) and “How will I know if I’ve achieved it?” (the Key Results).

John Doerr brought OKRs to Google in 1999, where they became central to the company’s management culture as it scaled from about 40 employees to more than 100,000. From there, OKRs spread to LinkedIn, Twitter, Uber, Airbnb, and hundreds of other organizations, and more recently into healthcare systems, universities, nonprofits, and government agencies that recognized in the framework a solution to their own execution challenges.

The core logic is deceptively simple. An Objective is a qualitative, inspiring statement of what you want to achieve — ambitious enough to require significant effort, specific enough to be meaningful. Key Results are the two to five quantitative measures that define what success looks like — specific, time-bound, and owned by a named individual. Initiatives are the projects and tasks that the team will undertake to move the Key Results. The framework creates a clear hierarchy: the Objective tells you where to go, the Key Results tell you how you’ll know when you’ve arrived, and the Initiatives describe how you’ll get there.

For government agencies struggling with the Execution Gap described in Article 2, OKRs provide the missing connective tissue: a management architecture that cascades from the highest-level mandate to the individual contributor’s daily work, creating visibility, accountability, and alignment at every level of the organization.

2. Anatomy of a Well-Formed Government OKR

What a strong Objective looks like, what makes a Key Result defensible, and how the two work together to create accountability.

The single biggest implementation failure in government OKR programs is writing weak OKRs. Vague Objectives that could mean anything to anyone. Activity-based Key Results that measure outputs rather than outcomes. Sandbagged targets designed to guarantee 100% achievement. Each of these failure modes destroys the value of the OKR framework and, worse, creates a false sense of performance accountability.

The diagram below illustrates a well-formed government OKR from the VA system, showing the relationship between the Objective, Key Results, and supporting Initiatives.

2.1 What Makes a Strong Objective

- Qualitative and inspiring — it should articulate a meaningful change in mission profit, not just describe activity

- Time-bound — scoped to a fiscal year or quarter, not open-ended

- Ambitious but achievable — uncomfortable but not impossible given available resources

- In the agency’s language — connected to the mission statement and existing strategic priorities

- A single clear outcome — avoid compound objectives that bundle multiple goals into one

2.2 What Makes a Strong Key Result

- Outcome-oriented — measures a change in the world, not a completed activity

- Quantitative — includes a specific numeric target, not qualitative language

- Time-bound — specifies the date by which the target will be achieved

- Owned — assigned to a single named individual who is accountable for progress

- Baseline-referenced — specifies the current state to make progress measurable

- Stretch but credible — aggressive enough to require effort, realistic enough to be achievable

| Common Failure Mode | Weak OKR Example | Strong OKR Example |
|---|---|---|
| Vague objective | ❌ “Improve our communications” | ✅ “Become the most trusted source of program updates for our beneficiary community by Q4” |
| Activity KR (not outcome) | ❌ “Complete 12 stakeholder briefings by Q3” | ✅ “Increase stakeholder confidence rating from 58% to 80% by Q3” |
| Unmeasurable KR | ❌ “Enhance digital capabilities across the division” | ✅ “Reduce average transaction processing time from 14 days to 3 days by Q4” |
| Output KR (not outcome) | ❌ “Publish updated program guidelines by June 30” | ✅ “Reduce beneficiary errors on program applications by 40% following guideline update” |
| KR with no owner | ❌ “Improve interagency data sharing (cross-agency effort)” | ✅ “[Name], Director of Data: Establish live API connection with partner agency by Q2” |
| Sandbagged KR | ❌ “Maintain current service delivery levels (baseline = current performance)” | ✅ “Increase service delivery throughput by 25% without additional FTE headcount” |

Figure 2: OKR Quality — Common Failure Modes with Weak and Strong Examples from Government Contexts
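
Several of the Key Result criteria in section 2.2 can be checked mechanically before a human reviews the wording. The sketch below is one way to do that, assuming a hypothetical `KeyResult` record; the field names, the owner, and the due date are invented for illustration and do not represent any platform's schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class KeyResult:
    """One Key Result. Field names are illustrative, not a platform schema."""
    description: str
    owner: Optional[str]       # a single named individual
    baseline: Optional[float]  # current state
    target: Optional[float]    # specific numeric target
    due: Optional[date]        # date by which the target should be reached


def quality_issues(kr: KeyResult) -> List[str]:
    """Return the section 2.2 criteria that this Key Result visibly fails."""
    issues = []
    if not kr.owner:
        issues.append("no owner")
    if kr.baseline is None:
        issues.append("no baseline")
    if kr.target is None:
        issues.append("no numeric target")
    if kr.due is None:
        issues.append("no due date")
    if kr.baseline is not None and kr.baseline == kr.target:
        issues.append("target equals baseline (possible sandbagging)")
    return issues


strong = KeyResult(
    description="Reduce average transaction processing time from 14 days to 3 days",
    owner="J. Rivera",       # hypothetical owner
    baseline=14.0,
    target=3.0,
    due=date(2025, 9, 30),   # hypothetical date
)
weak = KeyResult(
    description="Enhance digital capabilities across the division",
    owner=None, baseline=None, target=None, due=None,
)

print(quality_issues(strong))  # []
print(quality_issues(weak))
```

A check like this cannot tell whether a Key Result measures an outcome rather than an activity, or whether the target is a genuine stretch. Those judgments still belong to the OKR Champion and the team.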

3. OKRs vs. KPIs: Understanding the Critical Distinction

One of the most common sources of government OKR confusion — and how to resolve it cleanly.

When government performance professionals first encounter OKRs, their most common reaction is: “We already have performance measures. How are OKRs different from our existing KPIs?” This is exactly the right question, and the answer is important enough to get precisely right.

KPIs (Key Performance Indicators) and OKRs serve different functions in a performance management system. They are complementary, not competing. Understanding the distinction is essential for designing a government OKR program that adds value without creating confusion or duplication.

In practical terms: the performance metrics on a government agency’s existing dashboard (the measures it reports to OMB, Congress, and the public under GPRA-M) are primarily KPIs. They measure the ongoing operational health of the agency’s programs. OKRs sit above this layer, using the same underlying data but asking a different question: not “How is the program performing?” but “What specific ambitious outcomes are we committed to achieving this quarter, and who owns each one?”

Profit.co’s government platform is designed to accommodate both layers simultaneously. KPI data feeds can be configured as automated inputs to Key Result progress calculations, so that the operational metrics agencies are already collecting automatically update OKR progress without additional data entry burden.
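
To make the idea of a KPI feed driving Key Result progress concrete, the sketch below maps the latest reading of an operational metric onto a 0-1 progress value. The metric names, the `latest_kpis` dictionary, and the linear baseline-to-target formula are assumptions made for this example; they do not describe how Profit.co calculates progress.

```python
from typing import Dict, Optional

# Hypothetical KPI feed: metric name -> latest reported value.
latest_kpis: Dict[str, float] = {
    "avg_processing_days": 8.5,
    "stakeholder_confidence_pct": 69.0,
}

# Hypothetical Key Results, each tied to one KPI with a baseline and a target.
key_results = [
    {"name": "Processing time 14 -> 3 days", "kpi": "avg_processing_days",
     "baseline": 14.0, "target": 3.0},
    {"name": "Confidence rating 58% -> 80%", "kpi": "stakeholder_confidence_pct",
     "baseline": 58.0, "target": 80.0},
    {"name": "Application error rate 10% -> 6%", "kpi": "application_error_pct",
     "baseline": 10.0, "target": 6.0},
]


def progress_from_kpi(kr: dict, kpis: Dict[str, float]) -> Optional[float]:
    """Share of the baseline-to-target distance covered, clamped to 0..1.

    Returns None when the feed has no reading for the KR's metric.
    Works for metrics that should fall (14 -> 3) as well as rise (58 -> 80).
    """
    current = kpis.get(kr["kpi"])
    if current is None:
        return None
    span = kr["target"] - kr["baseline"]
    if span == 0:
        raise ValueError("target must differ from baseline")
    return max(0.0, min(1.0, (current - kr["baseline"]) / span))


for kr in key_results:
    print(kr["name"], progress_from_kpi(kr, latest_kpis))
```

Returning `None` for a missing reading, rather than 0.0, keeps a gap in the data feed from being mistaken for a lack of progress.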

4. Six Government-Specific Adaptations

The standard OKR methodology requires meaningful modification to work in the institutional context of government. Here are the six essential adaptations.

The OKR methodology was developed in the private sector and optimized for organizations with relatively clear profit objectives, flexible resource allocation, and short feedback loops. Government agencies operate in a fundamentally different institutional environment, and a naive transplant of corporate OKR practices will fail. The following six adaptations, developed through Profit.co’s implementation experience with government clients, are essential for success.

| Government-Specific Challenge | The Tension | Recommended Adaptation |
|---|---|---|
| Stretch Goals vs. Budget Accountability | Standard OKR practice sets aspirational targets where 70% achievement = success. This conflicts with government budget accountability where 100% obligation rates are expected. | Use two-tier targets in Profit.co: a Committed Target (100% expected; used for budget/reporting purposes) and a Stretch Target (the ambitious OKR goal). Clearly label both in the platform. |
| Annual Budget Cycle vs. Quarterly OKR Cadence | Government budget cycles are annual; OKR methodology runs quarterly. Resource allocation cannot be adjusted within the year even if OKRs show a need. | Set annual Objectives with quarterly Key Results. Use the quarterly OKR review to document resource constraints and build the evidence base for next year’s budget request. |
| Multiple Principals Problem | Federal agencies serve Congress, OMB, the White House, and the public simultaneously. Conflicting mandates make a single Objective hierarchy difficult. | Create a multi-stakeholder alignment map in Profit.co showing how each OKR connects to the relevant principal’s priorities. Use the hierarchy to surface and resolve conflicts explicitly. |
| Civil Service Performance Constraints | Union agreements and due process requirements constrain how OKR achievement data can be used in personnel decisions. | Design OKRs as a positive accountability tool, not a punitive one. Connect OKR data to performance recognition first; use it to support, not replace, formal appraisal processes. |
| Congressional Mandates as Constraints | Statutory requirements and congressional directives can override strategic priorities mid-year, disrupting OKR plans. | Build a ‘Mandate’ OKR category in Profit.co for compliance-driven goals that sit alongside strategic OKRs. Track both transparently; escalate conflicts to leadership immediately. |
| Multi-year Programs vs. Annual OKRs | Many government programs operate on 3-5 year timelines; forcing them into quarterly OKR cycles creates artificial milestones. | Use annual Objectives with multi-year Key Result trajectories. Profit.co supports multi-quarter KR tracking with intermediate milestone markers at each quarterly checkpoint. |

Figure 4: Six Government-Specific OKR Adaptations — the tensions and recommended solutions
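
The two-tier target in the first adaptation can be illustrated with a short sketch. It assumes a hypothetical `TwoTierTarget` record and reports the Committed and Stretch tiers separately; the class, the function, and the throughput figures are invented for the example and are not a description of the platform's data model.

```python
from dataclasses import dataclass


@dataclass
class TwoTierTarget:
    """A Key Result carrying both targets from the first row of Figure 4."""
    baseline: float
    committed: float  # expected to be met in full; used for budget/reporting
    stretch: float    # the ambitious OKR goal


def evaluate(t: TwoTierTarget, actual: float) -> dict:
    """Report the two tiers separately so neither hides the other."""
    if t.stretch == t.baseline:
        raise ValueError("stretch target must differ from baseline")
    rising = t.stretch > t.baseline  # direction of improvement
    committed_met = actual >= t.committed if rising else actual <= t.committed
    fraction = (actual - t.baseline) / (t.stretch - t.baseline)
    stretch_score = round(max(0.0, min(1.0, fraction)), 2)
    return {"committed_met": committed_met, "stretch_score": stretch_score}


# Hypothetical figures: monthly cases processed, with a 25% stretch over baseline.
throughput = TwoTierTarget(baseline=1000, committed=1100, stretch=1250)
print(evaluate(throughput, actual=1175))
# {'committed_met': True, 'stretch_score': 0.7}
```

Here the committed target is satisfied for budget and reporting purposes while the stretch score lands at 0.7, which is how the two accountability expectations can coexist on one Key Result.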

5. The Four-Phase Implementation Roadmap

A field-tested sequence for launching and sustaining an OKR program in a government agency.

OKR implementation in government typically fails for one of three reasons: insufficient leadership sponsorship, premature cascade (trying to go from agency to individual in a single quarter), or over-engineering the system (too many OKRs, too many levels, too much complexity before the culture has developed). The four-phase roadmap below is designed to avoid all three failure modes.

| Phase | Focus | Key Activities |
|---|---|---|
| Phase 1 | Foundation (Weeks 1–4) | |
| Phase 2 | Cascade (Weeks 5–10) | |
| Phase 3 | Individual Alignment (Weeks 11–16) | |
| Phase 4 | Optimization (Quarter 2+) | |

Figure 5: Four-Phase OKR Implementation Roadmap for Government Agencies

Best practice is to launch with no more than three levels in the first year: Agency/Department, Program/Division, and Individual. Additional levels can be added in Year 2 once the culture and tooling are established. Profit.co’s alignment tree feature makes it easy to add levels incrementally without disrupting the existing structure.

5.3 Quantity Discipline

The most common OKR quality problem in government is having too many objectives. Teams attempt to capture every priority, initiative, and mandate in the OKR system, resulting in 15-20 objectives per department, each with 5 Key Results. This creates an unwieldy system where nothing is truly prioritized and the weekly check-in burden is unsustainable.

The discipline of OKR is the discipline of prioritization. A department should have 3-5 Objectives per quarter, and never more than 5, each with 2-5 Key Results. If a priority cannot be accommodated within this constraint, the organization has not finished the prioritization conversation. Forcing that conversation is itself a major value of the OKR framework.
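
The quantity limits above are simple enough to enforce automatically when a department submits its draft OKRs. The following is a minimal sketch under the assumption that a draft is a plain dictionary mapping each Objective title to its list of Key Results; the function and constant names are hypothetical.

```python
from typing import Dict, List

MAX_OBJECTIVES = 5        # upper bound on Objectives per department per quarter
MIN_KRS, MAX_KRS = 2, 5   # Key Results allowed per Objective


def limit_violations(department_okrs: Dict[str, List[str]]) -> List[str]:
    """List every way a department's draft exceeds the quantity limits.

    `department_okrs` maps an Objective title to its Key Result descriptions.
    """
    problems = []
    if len(department_okrs) > MAX_OBJECTIVES:
        problems.append(
            f"{len(department_okrs)} Objectives (limit {MAX_OBJECTIVES})"
        )
    for title, krs in department_okrs.items():
        if not MIN_KRS <= len(krs) <= MAX_KRS:
            problems.append(
                f"'{title}' has {len(krs)} Key Results "
                f"(expected {MIN_KRS}-{MAX_KRS})"
            )
    return problems


# Hypothetical draft: one Objective is overloaded, one is under-specified.
draft = {
    "Cut permit backlog": ["KR1", "KR2", "KR3"],
    "Modernize intake": ["KR1", "KR2", "KR3", "KR4", "KR5", "KR6"],
    "Improve trust": ["KR1"],
}
for problem in limit_violations(draft):
    print(problem)
```

A non-empty result is a prompt to resume the prioritization conversation, not a reason to reject the draft outright.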

6. The OKR Management Cadence

The rhythm of check-ins, reviews, and resets that keeps OKRs alive throughout the execution year.

An OKR program that sets goals in October and reviews them in September is not a management system — it is a compliance exercise. The power of OKRs comes from the regular management cadence that keeps them alive, connected to operational decisions, and responsive to changing conditions. The cadence has four time scales, each serving a distinct function.

| Cadence | Audience | Purpose & Agenda | Platform Support |

|---|---|---|---|

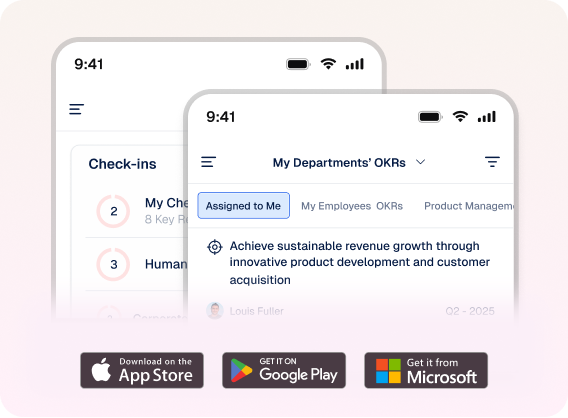

| Weekly | Individual & Team | Check-in updates on Key Results (5-10 min via Profit.co mobile or web). Flag blockers. Update progress percentages. | Automated by Profit.co check-in reminders; AI summarizes for manager visibility |

| Monthly | Department/Program | Review OKR progress dashboard. Address at-risk Key Results. Reallocate resources if needed. Celebrate wins. | 45-60 min meeting; live Profit.co dashboard drives agenda; AI Progress Agent prepares summary |

| Quarterly | Agency Leadership | Full OKR review and reset. Score completed cycle (0.0–1.0). Retrospective on what worked/didn’t. Set next quarter OKRs. | Half-day offsite or full leadership session; Profit.co generates performance narratives; new OKRs entered |

| Annual | Executive / Congress | Annual performance report. Strategic plan alignment check. Budget justification tied to OKR outcomes. GAO/IG readiness. | Profit.co generates annual mission profit summary; connects to GPRA-M reporting requirements |

Figure 6: Government OKR Management Cadence — four time scales, audiences, purposes, and Profit.co platform support

6.1 The Weekly Check-In: Foundation of the Cadence

The weekly check-in is the most important practice in sustaining an OKR program. It is also the most frequently skipped. When teams stop doing weekly check-ins, OKR progress data becomes stale, at-risk objectives are not surfaced in time for intervention, and the sense of accountability that OKRs create quickly dissipates.

Profit.co’s automated check-in reminders, mobile app, and AI Progress Agents are specifically designed to make the weekly check-in as frictionless as possible. A check-in takes 5-10 minutes per Key Result: update the progress percentage, note any blockers, and optionally add a comment about what drove the change. The AI Progress Agent then summarizes these updates into a management-ready narrative for the next monthly review, eliminating the reporting burden that traditionally makes performance management a tax rather than a tool.

6.2 The Quarterly Review and Reset

The quarterly OKR review is the most strategically significant event in the OKR calendar. It serves three functions simultaneously: it provides a retrospective on the quarter just completed, an assessment of the agency’s mission profit trajectory, and a planning session for the next quarter’s priorities.

The review begins with scoring: each Key Result is scored on a 0.0-1.0 scale according to the fraction of its target that was achieved, so reaching 70% of the target scores 0.7. The scores are then reviewed in the context of the Objective: did the agency make meaningful progress on what it was trying to achieve? The review concludes with a planning session for the next cycle, informed by what was learned.
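
The scoring step can be sketched in a few lines. The example assumes that achievement is measured as the share of the baseline-to-target change actually delivered, and that an Objective's score is the unweighted mean of its Key Result scores. Both rules are assumptions for illustration, since agencies may weight Key Results differently, and the figures are hypothetical.

```python
from statistics import mean
from typing import List, Tuple


def kr_score(baseline: float, target: float, final: float) -> float:
    """Share of the baseline-to-target change achieved, on the 0.0-1.0 scale."""
    if target == baseline:
        raise ValueError("target must differ from baseline")
    fraction = (final - baseline) / (target - baseline)
    return round(max(0.0, min(1.0, fraction)), 1)


def objective_score(krs: List[Tuple[float, float, float]]) -> float:
    """Unweighted mean of the KR scores (an assumed aggregation rule)."""
    return round(mean([kr_score(*kr) for kr in krs]), 1)


# Hypothetical quarter-end values: (baseline, target, final).
quarter = [
    (58, 80, 74),  # confidence rating, percent
    (14, 3, 5),    # processing time, days (lower is better)
    (10, 6, 8),    # application error rate, percent
]
print([kr_score(*kr) for kr in quarter])  # [0.7, 0.8, 0.5]
print(objective_score(quarter))           # 0.7
```

The numeric score only opens the conversation. The review still has to ask whether the Objective itself moved.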

A common question from government leaders is: what happens to unfinished OKRs? The answer depends on the reason. If a Key Result is at 0.7 and the goal is still relevant, it carries forward into the next quarter. If it is at 0.2 and the fundamental approach needs to change, it is redesigned. If the underlying Objective is no longer a priority — because of a policy shift, a budget change, or new leadership direction — it is closed and a new Objective is set.

7. OKR Scoring: The 0.0 to 1.0 Scale

How to score OKRs fairly, what the scores mean, and why the government impulse to aim for 1.0 is counterproductive.

| Score | Rating | What It Means & What to Do |
|---|---|---|
| 0.0 – 0.3 | Below Expectations | Fundamental obstacles prevented progress. Major rethink required — strategy, resources, or both. |
| 0.4 – 0.6 | Partial Progress | Meaningful progress made but target not achieved. Analyze root causes. Retain or modify for next cycle. |
| 0.7 – 0.9 | Strong Progress | The OKR sweet spot. Ambitious target achieved at a challenging level. Set a harder target next cycle. |
| 1.0 | Full Achievement | Target fully met. Ask: was it ambitious enough? A consistent 1.0 suggests the target was too conservative. |

Figure 7: OKR Scoring Scale — what each score range means and the appropriate management response
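
The bands in Figure 7 translate directly into a lookup. The sketch below rounds a score to one decimal place before classifying it, since the bands are stated to one decimal, and adds a hypothetical helper that flags a run of perfect scores. The three-cycle window in that helper is an assumption, not a rule from the methodology.

```python
from typing import List


def rating(score: float) -> str:
    """Map a 0.0-1.0 score to the rating bands in Figure 7."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    s = round(score, 1)  # bands are stated to one decimal place
    if s <= 0.3:
        return "Below Expectations"
    if s <= 0.6:
        return "Partial Progress"
    if s <= 0.9:
        return "Strong Progress"
    return "Full Achievement"


def target_may_be_too_conservative(history: List[float], cycles: int = 3) -> bool:
    """True when the last `cycles` scores were all 1.0 (cycles=3 is an assumption)."""
    recent = history[-cycles:]
    return len(recent) == cycles and all(s == 1.0 for s in recent)


print(rating(0.7))                                       # Strong Progress
print(target_may_be_too_conservative([0.8, 1.0, 1.0, 1.0]))  # True
```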

The government instinct is to score everything at 1.0. In a culture where 100% obligation rates and full compliance are the norm, anything less than complete achievement feels like failure. Unlearning this instinct is one of the most important cultural shifts that OKR implementation requires in government.

The OKR scoring philosophy inverts the typical government performance expectation. A consistent 0.7-0.9 score indicates that targets were ambitious and teams are performing at a high level. A consistent 1.0 indicates that targets were not ambitious enough and should be reset higher. And a 0.0-0.3 score indicates not failure of effort but failure of strategy — something fundamental about the approach needs to change.

Profit.co’s confidence score feature allows teams to signal trajectory before the end of the quarter — flagging early when a Key Result is at risk so that leadership can intervene before the score is fixed. This is the leading indicator function that transforms OKR scoring from a retrospective report card into a real-time management tool.

8. Common Failure Modes and How to Avoid Them

A field guide to the most frequent ways government OKR programs go wrong — and the specific countermeasures.

8.1 Leadership Abandonment

The most common and most fatal failure mode is senior leadership publicly endorsing OKRs and then not practicing them. When the Secretary or Director stops attending OKR reviews, stops referencing OKRs in leadership meetings, and stops connecting resource allocation decisions to OKR progress, the signal to the organization is clear: this is a compliance exercise, not a management system. OKR programs can survive this for one or two quarters before they collapse into paperwork.

Countermeasure: The OKR Champion must have a direct line to the agency head and standing permission to escalate leadership disengagement. Monthly leadership OKR reviews should be protected on the calendar as inviolable. The agency head’s own OKRs should be visible to all staff in the platform — demonstrating accountability from the top.

8.2 Initiative Creep

Teams that are unaccustomed to outcome-based thinking naturally drift from Key Results (outcome measures) to Initiatives (activity lists). Over time, the OKR system becomes a to-do list rather than an accountability structure, and the connection to mission profit evaporates.

Countermeasure: Profit.co’s AI Quality Agent flags Key Results that appear to be activity-based rather than outcome-based, prompting teams to revise them. OKR Champions should conduct quarterly quality audits, and the test is simple: “If we completed this Key Result but the underlying mission objective wasn’t achieved, would we consider ourselves successful?” If yes, the KR is measuring activities, not outcomes.

8.3 Siloed OKRs

Departments that set OKRs without reference to agency-level objectives — or to each other — produce local optimization at the expense of mission-level performance. Two departments can both score 0.9 on their respective OKRs while the agency’s cross-cutting priorities go unaddressed.

Countermeasure: The Profit.co alignment tree makes dependency and overlap visible before OKRs are finalized. Mandate an alignment review at the start of each cycle where department heads review each other’s draft OKRs and identify interdependencies. Assign shared Key Results to cross-cutting objectives where coordination is required.
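
An alignment review can be supported by a simple check over the draft hierarchy. The sketch below assumes each department Objective records at most one parent agency Objective, and reports both department Objectives with no valid parent and agency Objectives that no department supports. The data layout and all Objective names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def alignment_gaps(
    agency_objectives: List[str],
    department_parents: Dict[str, Optional[str]],
) -> Tuple[List[str], List[str]]:
    """Return (unaligned department Objectives, unsupported agency Objectives).

    `department_parents` maps a department Objective to the agency Objective
    it claims to support, or None if it names no parent.
    """
    unaligned = sorted(
        dept for dept, parent in department_parents.items()
        if parent not in agency_objectives
    )
    covered = {p for p in department_parents.values() if p in agency_objectives}
    unsupported = [a for a in agency_objectives if a not in covered]
    return unaligned, unsupported


# Hypothetical draft cycle.
agency = ["Faster benefits delivery", "Stronger data sharing"]
departments = {
    "Claims: cut processing time": "Faster benefits delivery",
    "IT: migrate intake system": "Faster benefits delivery",
    "Comms: refresh newsletter": None,
}
unaligned, unsupported = alignment_gaps(agency, departments)
print(unaligned)    # ['Comms: refresh newsletter']
print(unsupported)  # ['Stronger data sharing']
```

The second list is the one that exposes the pattern described in section 8.3: every department can score well while a cross-cutting agency priority has no owner at all.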

8.4 OKR Proliferation

As OKR programs mature, there is a natural tendency to add more objectives, more Key Results, more levels of the hierarchy, and more reporting requirements. Within two years, a well-intentioned OKR program can become more burdensome than the system it replaced.

Countermeasure: Enforce hard limits. No department has more than 5 Objectives. No Objective has more than 5 Key Results. No level of the hierarchy is added without retiring another. The OKR Champion’s job includes actively pruning the system, not just growing it.

9. Conclusion: The Government OKR Opportunity

The OKR framework, thoughtfully adapted for the institutional context of government, represents one of the most powerful management tools available to public sector leaders seeking to close the Execution Gap. It provides the vocabulary of accountability, the architecture of alignment, and the management cadence that connects strategic vision to daily operational reality.

The agencies that have successfully implemented OKRs in government — and there is a growing cohort of them, from county health departments to federal program offices — share a set of common characteristics: genuine leadership commitment, an empowered OKR Champion, a willingness to prioritize ruthlessly, and a platform that makes the mechanics of OKR management effortless rather than burdensome.

Profit.co for Government is designed specifically for this context. Its AI-native OKR authoring, cascading alignment tree, automated check-in infrastructure, and mission profit dashboards provide the technical foundation for government OKR success. But the platform is a tool, not a solution. The solution is the management culture that the tool supports — and that culture begins with a leadership team that is willing to be held accountable, publicly, to ambitious goals.

That willingness is the defining characteristic of the highest-performing government agencies. And it is available to every agency prepared to make that commitment.