TL;DR

Agentic AI in Project Portfolio Management doesn’t replace your judgment. It does the analytical heavy lifting, so you walk into every portfolio review with evidence, not just data. But only if your data governance is already solid.

A familiar scenario plays out in most organizations. About 45 minutes into a quarterly portfolio review, everyone is looking at the same dashboard. There are seventeen slides, numbers are populated, variance charts are color-coded, and a summary deck has been carefully assembled over the weekend. The room is not lacking information.

Then the CFO asks a different kind of question.

Not, “Are we investing in the right things?” But, “Given everything we know right now, what should we change?”

This is where traditional portfolio reviews break down. The data is visible, but the insight is not. The organization can report on performance, but it cannot translate that performance into clear, defensible decisions.

What follows is hesitation. People move between slides. Someone tries to interpret a chart. Eventually, a response comes in the form of a follow-up rather than an answer.

This moment is common across enterprise portfolio reviews. The issue is not the absence of data. The issue is the absence of inference. The organization can see what is happening, but it cannot confidently explain what it means or what should change.

This is the gap that agentic AI in project management is designed to address. It does not replace the people in the room. It changes the nature of the conversation. Instead of spending days preparing reports that still do not answer the core question, teams gain the ability to synthesize data into insight and connect performance to decision-making in real time.

“Artificial intelligence will have a more profound impact on humanity than fire, electricity and the internet.”

What “Agentic” Actually Means

The word is everywhere right now. And like most trending terms in enterprise software, it’s being stretched well past its original meaning.

Agentic AI, properly defined, is a system that can pursue a goal across multiple steps, make decisions along the way, and take action without requiring human approval for every micro-step. It’s not a smarter chatbot. It’s not a threshold alert with a new coat of paint.

According to Gartner, by 2028, 33% of enterprise software applications will include agentic AI, up from less than 1% in 2024. That’s a fast-moving curve. And the PPM market is right in the middle of it.

But here’s the problem Gartner also flagged: over 40% of agentic AI projects are predicted to be cancelled by the end of 2027 due to unclear business value and inadequate risk controls.

The failure pattern has a name. Gartner calls it agent washing. Rule-based workflow triggers and conditional alerts are being relabeled as AI-driven decisions. The outputs look smart. The logic underneath is still a formula someone wrote in 2019.

If your PM tool flips a status field to red when a date passes, that’s not AI inference. That’s a lookup function.

The Three Questions No Dashboard Was Built to Answer

A Gartner SPM analyst recently reviewed the enterprise PPM market and landed on a precise observation. The data infrastructure is there. The strategy-to-portfolio traceability is there. What’s missing is the layer between data and decision.

Specifically, the ability to answer three questions that matter most to every portfolio leader:

- Are we still investing in the right direction?

- Are we managing the budget well?

- Are we on track to deliver the benefits?

These are not reporting questions. No dashboard answers them. They require a system to look at everything it knows, compare current trajectory against original commitments, detect drift, and surface a signal with supporting evidence.

That’s inference. And it’s the gap most enterprise PPM tools haven’t closed.

The research backs this up. PMI found that 82% of senior leaders expect AI to have significant impact on project delivery. But only 21% of organizations currently leverage AI effectively in their project work. The gap isn’t reluctance. It’s a capability mismatch. Teams get more dashboards. The decisions that drive portfolio outcomes stay entirely human-dependent.


Who Should Actually Be Making What Decisions

Before you deploy any agentic capability, one design question has to be answered clearly: at what level does the system act on its own, at what level does it recommend and wait, and at what level does it always defer? This is the governance architecture that separates responsible AI deployment from the washed variety.

Here’s how it maps in a portfolio context:

| Decision Level | Governing Principle | PPM Examples | What AI Does | Human Role |
|---|---|---|---|---|
| Level A: AI Acts | High-frequency, low-stakes, fully reversible | Auto-tag demand by OKR; trigger milestone alert; generate draft risk entry | Acts and logs. Instant human override available. | Monitor, override if needed |
| Level B: AI Recommends | Medium-stakes, partially reversible, context-dependent | Investment alignment drift; budget trajectory warning; benefits forecast divergence | Generates recommendation with evidence chain and confidence level | Approves, overrides, or escalates with documented rationale |
| Level C: AI Informs | High-stakes, low reversibility, board-level accountability | Cancel a funded programme; reallocate capital above materiality threshold; change strategic rationale | Surfaces data and scenario analysis. Never frames output as a decision. | Decides with full authority. AI is an evidence source only. |

The three big portfolio questions all sit at Level B. Not low-stakes enough to automate. Not high-stakes enough to treat AI as a passive observer. Exactly the space where inference adds the most value.

The governing principle worth putting somewhere visible: AI authority should be calibrated to decision reversibility, not to what the AI is technically capable of doing.
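One way to make that principle concrete is to route each decision type by stakes and reversibility alone, and to leave AI capability out of the function entirely. The Python sketch below is only an illustration of the Level A / B / C mapping in the table. The `Decision` fields, the label strings, and the `route` function are hypothetical names chosen for this example, not part of any product API.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    A_AI_ACTS = "A"        # AI acts and logs; human can override
    B_AI_RECOMMENDS = "B"  # AI recommends; human approves
    C_AI_INFORMS = "C"     # AI supplies evidence; human decides


@dataclass(frozen=True)
class Decision:
    name: str
    stakes: str      # "low", "medium", or "high" (hypothetical labels)
    reversible: str  # "full", "partial", or "low" (hypothetical labels)


def route(decision: Decision) -> Level:
    """Return the most restrictive level implied by stakes or reversibility.

    Note what is absent: nothing here asks what the AI is capable of doing.
    """
    if decision.stakes == "high" or decision.reversible == "low":
        return Level.C_AI_INFORMS
    if decision.stakes == "medium" or decision.reversible == "partial":
        return Level.B_AI_RECOMMENDS
    return Level.A_AI_ACTS


examples = [
    Decision("Auto-tag demand by OKR", "low", "full"),
    Decision("Budget trajectory warning", "medium", "partial"),
    Decision("Cancel a funded programme", "high", "low"),
]
routed = {d.name: route(d) for d in examples}
```

Because the function takes the stricter of the two signals, a low-stakes decision that is hard to reverse still lands at Level C. That is a design choice for this sketch; your own thresholds may differ.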

The Data Problem Is Real

Here’s the part most vendor demos skip. Agentic AI in Project Portfolio Management is only as trustworthy as the data governance underneath it. And in most enterprise portfolios, that governance has at least one of three gaps:

- OKR linkage mapped retrospectively. If a programme’s connection to a strategic objective was annotated six months after approval, the alignment score is built on assumption. The inference will look real. It won’t be.

- No structural separation between approved variance and execution variance. A $6M portfolio variance could be $5.4M of signed, governed scope decisions and $0.6M of genuine overrun. Or the reverse. If the system can’t tell the difference, the budget AI can’t either.

- Benefit commitments live in PDF business cases. Not in structured system records. Not linked to investment approvals. The AI has nothing to forecast against except the current tracking data, which isn’t the same thing as the original commitment.

Each of these gaps doesn’t just weaken the AI output. It makes confidently wrong AI answers possible at portfolio scale. That’s worse than no AI at all.
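The second gap is easy to see with arithmetic. The Python sketch below splits a $6M portfolio variance into approved and execution variance, assuming each variance record carries a flag for whether a signed, governed scope decision backs it. The record layout, the `approved_change` flag, and the per-programme figures are hypothetical; only the $5.4M / $0.6M split comes from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VarianceEntry:
    programme: str
    amount_usd: int        # positive means over baseline
    approved_change: bool  # True if backed by a signed, governed scope decision


def split_variance(entries):
    """Return (approved, execution) variance totals in USD."""
    approved = sum(e.amount_usd for e in entries if e.approved_change)
    execution = sum(e.amount_usd for e in entries if not e.approved_change)
    return approved, execution


# Hypothetical records that add up to the $6M example.
entries = [
    VarianceEntry("Programme A", 3_000_000, True),
    VarianceEntry("Programme B", 2_400_000, True),
    VarianceEntry("Programme C", 600_000, False),
]

approved, execution = split_variance(entries)
total = approved + execution
```

Remove the `approved_change` flag and all the system has left is `total`. The same $6M could then describe a well-governed portfolio or a badly overrun one, and any budget inference built on it inherits that ambiguity.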

The AI Readiness Checklist

Before activating any portfolio AI inference capability, check these eight prerequisites:

- [ ] OKR linkage established at demand intake, not mapped after programme approval

- [ ] Benefits commitment recorded as structured data, linked to investment approval event

- [ ] Governed baseline management in place with approved variance separated from execution variance

- [ ] Investment tier classification active (strategic, run-the-business, compliance, enabler)

- [ ] Role-based authority thresholds and an AI RACI defined for each decision type (Responsible = AI draft/recommendation, Accountable = human decision owner, Consulted = domain reviewers, Informed = stakeholder visibility)

- [ ] Decision boundary architecture documented (Level A / B / C mapping complete)

- [ ] AI recommendation explainability standard defined (any output can be explained to the board without calling the vendor)

- [ ] Override and escalation path governed and recorded in the system of record

Score 8 of 8? Your data foundation supports meaningful AI inference now. Score below 5? The AI will amplify the governance gaps, not work around them. Fix the governance first.
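If you want to track the checklist in a script rather than on paper, the scoring rule can be sketched in a few lines of Python. The item labels are shortened versions of the eight prerequisites, and the function name and verdict strings are hypothetical. The text above gives guidance only for a score of 8 and for scores below 5, so the sketch labels scores of 5 to 7 as unspecified rather than inventing a verdict for them.

```python
CHECKLIST = [
    "OKR linkage at demand intake",
    "Structured benefits commitment linked to approval",
    "Approved variance separated from execution variance",
    "Investment tier classification active",
    "Authority thresholds and AI RACI defined",
    "Decision boundary architecture documented",
    "Explainability standard defined",
    "Override and escalation path governed",
]


def readiness(checked):
    """Return (score, verdict) for the set of checklist items already met."""
    score = sum(1 for item in CHECKLIST if item in checked)
    if score == len(CHECKLIST):
        verdict = "foundation supports AI inference"
    elif score < 5:
        verdict = "fix governance first"
    else:
        # Scores of 5 to 7 are not covered by the guidance in the text.
        verdict = "unspecified: review the open items"
    return score, verdict


full = readiness(set(CHECKLIST))
weak = readiness(set(CHECKLIST[:4]))
middle = readiness(set(CHECKLIST[:6]))
```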

What This Actually Looks Like in Practice

Imagine a portfolio leader at a mid-size financial services firm. Their PMO uses a platform with an investment-alignment module integrated into their quarterly OKR refresh.

In Q2, the strategy team deprioritizes two corporate objectives related to legacy infrastructure modernization. The AI compares the current portfolio’s OKR weight distribution against the updated priorities and signals that three of the top five funded programs by investment value are now serving deprioritized objectives. Combined exposure: $4.2M.

The PMO Director gets a recommendation, not an alert. The recommendation includes the evidence chain, the current delivery stage of each program, and two options: formally review them against the revised strategy or document the continued investment as a deliberate governance decision. The AI made no decision. The AI made inaction impossible to miss. That’s the standard to aim for.
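The core of that signal can be sketched in a few lines of Python: rank programs by investment, take the top five, and check which ones map to objectives that were just deprioritized. Everything in the sketch is hypothetical, including the program names, the objective labels, and the individual amounts, which were chosen so that the exposure matches the $4.2M in the scenario. A real alignment module would also weigh OKR weights and delivery stage, which this sketch leaves out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Programme:
    name: str
    objective: str       # the strategic objective this program is linked to
    investment_usd: int


def drift_signal(programmes, deprioritized, top_n=5):
    """Return (exposed programs, total exposure) among the top_n by investment."""
    top = sorted(programmes, key=lambda p: p.investment_usd, reverse=True)[:top_n]
    exposed = [p for p in top if p.objective in deprioritized]
    return exposed, sum(p.investment_usd for p in exposed)


# Hypothetical portfolio; amounts are illustrative only.
portfolio = [
    Programme("Core Platform Refresh", "legacy-modernization-1", 1_800_000),
    Programme("Data Centre Migration", "legacy-modernization-2", 1_500_000),
    Programme("Customer Onboarding", "growth", 1_200_000),
    Programme("Mainframe Retirement", "legacy-modernization-1", 900_000),
    Programme("Regulatory Reporting", "compliance", 800_000),
    Programme("Intranet Redesign", "legacy-modernization-2", 300_000),
]
deprioritized = {"legacy-modernization-1", "legacy-modernization-2"}

exposed, exposure_usd = drift_signal(portfolio, deprioritized)
```

The function returns evidence, not a verdict. Deciding whether to review the three programs or to document continued investment stays with the PMO Director, which keeps the output at Level B.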


What is agentic AI in project management?

Agentic AI in project management refers to AI systems that can pursue multi-step goals, make intermediate decisions, and surface recommendations without requiring human input at every stage. In PPM, this means detecting portfolio drift, flagging budget anomalies, and forecasting benefit realization gaps, then routing those signals to the right decision-maker.

Which portfolio decisions can AI make on its own?

It depends on the decision type. Low-stakes, reversible operational tasks (tagging, alerting, status updates) can be handled autonomously. Medium-stakes decisions like investment alignment drift or budget trajectory warnings should be AI-recommended but human-approved. High-stakes decisions involving funded programme cancellation or capital reallocation should always remain with human authority.

What data governance does portfolio AI need?

At minimum: OKR linkage at demand intake, governed baseline management that separates approved variance from execution variance, and structured benefit commitment records tied to investment approvals. Without these, AI inference generates confident outputs without reliable data to base them on.

What is agent washing, and how do you detect it?

Agent washing is the practice of relabeling existing automation, rule-based triggers, or legacy workflow logic as AI-powered decision-making. It’s common in enterprise software marketing. The way to detect it: ask a vendor to explain the inference logic behind any AI recommendation. If the answer is a rule or threshold, it’s automation. If it requires the system to synthesize patterns across data, compare trajectories, and produce a considered output, it may be genuine inference.

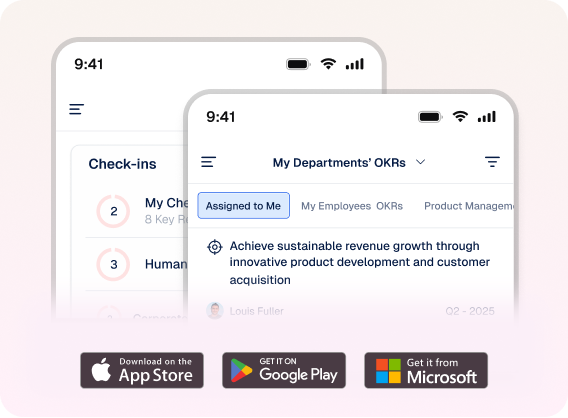

How does Profit.co support AI in PPM?

Profit.co is built on the data architecture that makes AI recommendations in PPM trustworthy: OKR-linked demand intake, governed baseline management, and structured benefits commitment tracking. The platform connects strategy to portfolio to project execution, creating the data foundation that AI inference requires to produce reliable signals rather than sophisticated noise.

