TL;DR

- Strategic alignment scoring at intake creates a perverse incentive: the higher the weight placed on it, the stronger the motivation to fake it.

- Four gaming patterns show up in almost every intake process after the first planning cycle: objective stretching, keyword alignment, retrospective rationalization, and priority stacking.

- Governance rules can’t stop these patterns because they govern behavior without verifying claims.

- AI-driven validation introduces three mechanisms that do verify claims: strategy suggestion at submission, deviation workflow triggers, and independent alignment scoring during prioritization.

- None of this works unless your strategic objectives are specific and measurable. Vague OKRs produce vague AI scores.

It’s the fourth Wednesday of the quarter. The portfolio committee is reviewing intake submissions. Twelve project requests are on the table. Ten of them are linked to the same strategic objective: “Accelerate Digital Transformation.” Among them: a legacy ERP upgrade, a mobile app refresh, a cybersecurity compliance project, a CRM implementation that the CRO informally approved six weeks ago, and a workflow automation initiative that one team had been planning since last fiscal year.

Every single one has been scored “high” on strategic alignment. The PMO lead knows at least three of these would have been submitted regardless of the strategic framework. The intake form for the CRM project? Completed after the fact. The committee has 90 minutes to make funding recommendations across all twelve.

This is not a broken governance process. Surprised? Confused? Everything is working exactly as designed. That’s the problem.

Why Do People Game the System?

Gaming the alignment process is not a people problem. It’s a design problem.

When strategic alignment becomes a weighted criterion in portfolio prioritization, which is absolutely the right thing to do, you simultaneously create a high-value signal that every rational requestor will try to maximize. The higher the weight, the stronger the incentive.

Three things make this almost inevitable:

1. Funding pressure is real. A department that doesn’t make it into the approved portfolio doesn’t get funded. Strategic alignment scoring is often the difference between an initiative moving forward and being deferred for 18 months. That’s a powerful motivator.

2. The intake form is a blank canvas. Most intake systems ask requestors to select a strategic objective from a dropdown. No system-generated suggestion. No friction. No reference point. The requester controls the selection entirely.

3. PMOs don’t have the bandwidth to audit everything. A team managing 40 to 60 submissions per cycle cannot deep-dive every alignment claim. A well-written narrative that mirrors the language of the strategic framework is effectively indistinguishable from a genuine alignment claim in a manual review.


The Four Patterns That Show Up Every Cycle

After the first planning cycle, requestors learn the system. By the second and third, gaming is in full swing. Here’s what it looks like.

| Gaming Pattern | What It Looks Like | Why Governance Can’t Catch It |
|---|---|---|
| Objective Stretching | A legacy ERP upgrade is submitted as supporting “Digital Transformation,” technically defensible, strategically weak | The objective is broad enough to absorb the claim. No mechanism tests the strength of the connection. |
| Keyword Alignment | The narrative mirrors the strategic objective almost verbatim, but the deliverable has no real causal link to it | PMO reviewers rarely have the domain knowledge to audit every submission for narrative authenticity. |
| Retrospective Rationalization | Project is pre-decided by leadership; intake form is completed after the fact to satisfy governance | By the time the PMO sees it, the budget is already committed. Rejection creates political friction, not governance integrity. |
| Priority Stacking | A single initiative is linked to four separate strategic objectives to maximize weighted score across all dimensions | Multi-objective linking is often permitted by design. Gaming the weighting model through volume is structurally allowed. |
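Priority stacking is easiest to see in a toy version of the scoring model. The sketch below is hypothetical: the objective names, weights, and 0-10 scores are invented, and the "capped" variant is just one possible countermeasure, not a feature of any particular PPM tool. Under a purely additive model, one initiative linked to four objectives at a modest score of 6 outscores a focused initiative scored 9 against a single objective.

```python
# Hypothetical objective weights for an additive alignment model.
OBJECTIVE_WEIGHTS = {
    "Digital Transformation": 0.30,
    "Customer Experience": 0.25,
    "Operational Efficiency": 0.25,
    "Risk Reduction": 0.20,
}


def additive_alignment(links: dict[str, float]) -> float:
    """Sum weight * score over every linked objective (rewards link volume)."""
    return sum(OBJECTIVE_WEIGHTS[obj] * score for obj, score in links.items())


def capped_alignment(links: dict[str, float]) -> float:
    """Count only the strongest weighted link (volume-neutral alternative)."""
    return max(OBJECTIVE_WEIGHTS[obj] * score for obj, score in links.items())


focused = {"Digital Transformation": 9}          # one strong, specific link
stacked = {obj: 6 for obj in OBJECTIVE_WEIGHTS}  # one initiative, four links

print(round(additive_alignment(focused), 2), round(additive_alignment(stacked), 2))  # 2.7 6.0
print(round(capped_alignment(focused), 2), round(capped_alignment(stacked), 2))      # 2.7 1.8
```

Capping is only one design choice; averaging across links, or limiting how many objectives a request may claim, would also remove the reward for volume.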

Here’s the uncomfortable part: none of these patterns requires bad intent. A department head may genuinely believe their ERP upgrade supports digital transformation. Retrospective rationalization often happens because the sponsor believes the project is right, regardless of process. The system has no mechanism to tell the difference between genuine alignment and asserted alignment.

Why Adding More Governance Rules Doesn’t Fix This

The instinctive response to gaming is more governance: stricter review stages, more sign-offs, better submission guidelines. These are reasonable moves. They don’t solve the problem.

Adding a requirement to justify alignment in writing doesn’t produce accurate alignment claims. It produces accurate-sounding alignment claims. A requester motivated to game the system will write a better justification, not a more honest one.

There’s also a false precision problem. A score of 8.2 feels more definitive than “this seems strategically relevant.” But when the alignment claims feeding that model haven’t been validated, the precision is cosmetic. The committee is making funding decisions on a number that’s only as reliable as the self-reported inputs that produced it.
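The arithmetic behind the false-precision problem is easy to sketch. Assuming a hypothetical three-criterion model (the criteria, weights, and scores are illustrative, not taken from any real portfolio), the same request lands at 8.2 or 6.2 depending solely on whether its alignment input is self-reported or validated.

```python
# Hypothetical weighted prioritization model; all numbers are illustrative.
WEIGHTS = {"alignment": 0.4, "value": 0.3, "feasibility": 0.3}


def composite(scores: dict[str, float]) -> float:
    """Weighted sum of 0-10 criterion scores, rounded to one decimal."""
    return round(sum(WEIGHTS[k] * scores[k] for k in WEIGHTS), 1)


self_reported = {"alignment": 9, "value": 8, "feasibility": 7.3}
validated = {**self_reported, "alignment": 4}  # same request, alignment checked

print(composite(self_reported))  # 8.2
print(composite(validated))      # 6.2
```

Nothing about the other two criteria changed; the two-point swing comes entirely from the unverified alignment input.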

What AI Validation Actually Does

Rule-based governance governs behavior without verifying claims. AI-driven validation does the opposite: it verifies claims at a scale and consistency no human review panel can match.

Three mechanisms work together to change the dynamic.

| AI Mechanism | What It Does | Problem It Solves |
|---|---|---|
| Strategy Suggestion at Intake | AI analyzes the request details and suggests the most relevant objectives before the requester makes a selection | Eliminates the blank-canvas problem. Any deviation from the suggestion now requires an explanation. |
| Deviation Workflow Trigger | When the requester’s selection diverges significantly from the AI suggestion, the system flags it and can trigger a mandatory justification or review | Creates an audit trail for anomalous alignments. Shifts the burden of proof to the requester. |
| Independent AI Alignment Scoring | During portfolio review, the system generates its own alignment score and shows it alongside the requester’s self-reported score | Separates the claimed alignment from the substantiated alignment, side by side, visible to the governance committee. |
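The first two mechanisms can be sketched in a few lines. This is a minimal illustration under loud assumptions: the objective names and descriptions are hypothetical, and plain word-overlap cosine similarity stands in for whatever model a real platform uses (a production system would more likely rely on embeddings or a language model). The point is the control flow: rank objectives before the requester chooses, then flag any selection that falls outside the top suggestions.

```python
import math
import re
from collections import Counter

# Hypothetical objective library. Word counts are a crude stand-in for the
# semantic analysis a real platform would perform.
OBJECTIVES = {
    "Accelerate Digital Transformation": "accelerate digital transformation",
    "Security compliance": "close security compliance gaps and reduce audit findings",
    "Finance modernization": "upgrade legacy finance and ERP systems to cut close time",
}


def tokens(text: str) -> Counter:
    """Lowercased word counts for a piece of text."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def suggest(request: str, top_k: int = 2) -> list[str]:
    """Strategy suggestion: rank objectives by similarity to the request."""
    req = tokens(request)
    ranked = sorted(
        OBJECTIVES,
        key=lambda name: cosine(req, tokens(OBJECTIVES[name])),
        reverse=True,
    )
    return ranked[:top_k]


def needs_justification(request: str, selected: str, top_k: int = 2) -> bool:
    """Deviation trigger: flag a selection outside the top-k suggestions."""
    return selected not in suggest(request, top_k)


request = "Upgrade the legacy ERP system to cut month-end finance close time"
print(suggest(request))  # ['Finance modernization', 'Security compliance']
print(needs_justification(request, "Accelerate Digital Transformation"))  # True
```

Here the ERP upgrade linked to "Accelerate Digital Transformation" (the objective-stretching case) is routed to a justification step rather than silently accepted.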

A submission in which the requester self-scores a 9 out of 10 on strategic alignment and the AI generates a 4 warrants scrutiny before funding approval, regardless of how polished the narrative is.
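The side-by-side comparison reduces to a simple gap check. In this sketch the 3-point threshold and the submission names are hypothetical; a committee would tune the threshold to its own scoring scale.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    name: str
    self_score: int  # requester's self-reported alignment, 0-10
    ai_score: int    # independent AI alignment score, 0-10


def needs_scrutiny(sub: Submission, max_gap: int = 3) -> bool:
    """Flag when claimed alignment exceeds the AI score by more than max_gap."""
    return sub.self_score - sub.ai_score > max_gap


queue = [
    Submission("CRM implementation", self_score=9, ai_score=4),   # hypothetical
    Submission("Self-service portal", self_score=8, ai_score=7),  # hypothetical
]
print([s.name for s in queue if needs_scrutiny(s)])  # ['CRM implementation']
```

The check is deliberately one-sided, since inflated claims are the gaming risk; using `abs()` on the difference would also surface requests that under-claim their alignment.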

One Thing That Makes or Breaks AI Scoring

The AI alignment score is only as good as the strategic outcome it’s comparing against.

If your objective is “Strengthen Digital Capability,” almost any technology project can claim to be aligned. The AI score will reflect that ambiguity.

But if that objective has been deconstructed into a specific, measurable outcome, say, “migrate 70% of customer-facing transactions to digital self-service channels by Q4,” the AI has a precise comparison point. The score becomes genuinely discriminating.

This is the upstream dependency. Vague OKRs produce vague scores. Specific OKRs produce governance-grade signals.

Is Your Intake System Producing Governance?

Three signals consistently indicate gaming is active:

- Intake submissions cluster on two or three objectives regardless of how diverse the actual project pipeline is. If 80% of submissions link to “Digital Transformation,” that’s strategic camouflage, not strategic alignment.

- The distribution of alignment scores is implausibly uniform. If 70% of submissions score “high” alignment, the model is validating, not differentiating.

- PMO reviewers can’t explain why one high-scoring request is more aligned than another. If the score can’t be explained, it can’t be governed.
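The first two signals can be checked mechanically at the end of each intake cycle. This sketch assumes each submission is recorded as an (objective, rating) pair; the default thresholds simply mirror the 80% and 70% examples above and are illustrative, not benchmarks. The third signal, explainability, still needs a human reviewer.

```python
from collections import Counter


def gaming_signals(
    submissions: list[tuple[str, str]],
    top_n: int = 1,
    cluster_share: float = 0.8,
    high_share: float = 0.7,
) -> dict[str, bool]:
    """Check one cycle's intake for clustering and uniformly high ratings.

    Each submission is (linked_objective, alignment_rating).
    """
    if not submissions:
        return {"clustering": False, "uniform_high_scores": False}
    total = len(submissions)
    by_objective = Counter(objective for objective, _ in submissions)
    # Share of submissions captured by the top_n most-linked objectives.
    top = sum(count for _, count in by_objective.most_common(top_n)) / total
    # Share of submissions rated "high" on alignment.
    high = sum(1 for _, rating in submissions if rating == "high") / total
    return {"clustering": top >= cluster_share, "uniform_high_scores": high >= high_share}


# The opening scenario: 12 requests, 10 on one objective, all rated "high".
# "Objective B" and "Objective C" are placeholder names.
cycle = [("Accelerate Digital Transformation", "high")] * 10 + [
    ("Objective B", "high"),
    ("Objective C", "high"),
]
print(gaming_signals(cycle))  # {'clustering': True, 'uniform_high_scores': True}
```

Raising `top_n` to 2 or 3 tests the "two or three objectives" form of the clustering signal.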

The Anatomy of a Gamed Intake System vs. AI-Validated Intake

| Stage | Without AI (Gamed System) | With AI Validation (Controlled System) |
|---|---|---|
| 1. Intake Submission | Intake form submitted | Intake form submitted |
| 2. Objective Selection | Requestor selects any objective → No system reference point | AI suggests relevant objectives first |
| 3. Alignment Framing | Narrative written to mirror strategic language → No authenticity check | Requestor selects objective. Deviations flagged with mandatory justification |
| 4. Scoring | Self-scored alignment (e.g., 9/10) → No independent validation | AI generates an independent alignment score during prioritization |
| 5. Review Process | Portfolio committee reviews claimed score → Cannot distinguish real vs. inflated alignment | The committee sees the claimed score vs. AI score side by side |
| 6. Decision Outcome | Funding approved based on unverified alignment data | Funding decisions based on substantiated alignment |

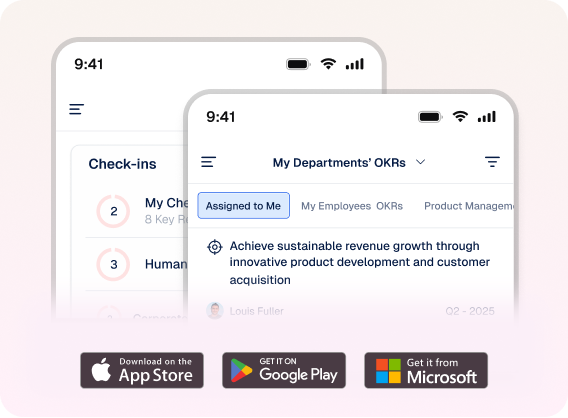


Profit.co’s Strategic PPM platform connects your OKR framework directly to the project intake process. It generates AI-driven alignment suggestions at submission, triggers deviation workflows for anomalous claims, and presents claimed vs. AI-scored alignment side by side during portfolio review.

Your governance committee deserves to make funding decisions on substantiated alignment — not asserted alignment.

Frequently Asked Questions

Why Does Gaming Get Worse After the First Planning Cycle?

In the first cycle, requestors are still learning the system. Alignment claims tend to be reasonably accurate because no one has yet mapped the optimization opportunities. By the second and third cycles, the patterns are established: which objectives carry the most weight, which thresholds are easiest to clear, and which narratives pass without challenge. Gaming is a learned behavior, and it accelerates as the portfolio process matures.

Does the AI Score Replace the Committee’s Judgment?

No. The AI score is a governance input, not a governance decision. Its purpose is to surface divergences so the committee can apply judgment to the cases that warrant it. A committee that acts automatically on AI scores is not exercising governance; it’s outsourcing it.

What Happens When the AI Score Is Wrong?

It will be wrong sometimes. The deviation workflow is designed precisely for this: it creates a formal channel for the requester to make their case, and it ensures that the case is documented and visible to the committee. A well-structured justification from a requester whose project was incorrectly flagged is a stronger governance signal than a high self-reported score with no explanation.

Does AI Scoring Still Work if Our Objectives Are Broad?

Partially. The AI can still identify whether a project’s deliverables are semantically related to a broad objective, but the score will have less discriminating power. The highest-value first step is objective deconstruction: converting each objective into two to four specific, measurable outcomes. Once those are in place, AI alignment scoring produces governance-grade signals.
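One way to make objective deconstruction concrete is to treat it as a data-structure check: an objective counts as ready for scoring only once it carries two to four outcomes, each with a metric, a numeric target, and a deadline. This is a hypothetical sketch, not a Profit.co schema. The first outcome reuses the "70% of customer-facing transactions" example from earlier; its year is illustrative, and the second outcome is invented purely to satisfy the two-to-four rule.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Outcome:
    metric: str    # what is measured
    target: float  # numeric target value
    unit: str      # unit of the target, e.g. "%"
    due: date      # deadline for hitting the target


@dataclass
class Objective:
    name: str
    outcomes: list[Outcome] = field(default_factory=list)

    def is_scoring_ready(self) -> bool:
        """True once the objective has two to four measurable outcomes."""
        return 2 <= len(self.outcomes) <= 4


vague = Objective("Strengthen Digital Capability")

specific = Objective(
    "Strengthen Digital Capability",
    outcomes=[
        # Illustrative year; the text says only "by Q4".
        Outcome("customer-facing transactions on digital self-service", 70.0, "%",
                date(2025, 12, 31)),
        # Hypothetical second outcome, added only to meet the two-to-four rule.
        Outcome("self-service requests resolved without agent handoff", 60.0, "%",
                date(2025, 12, 31)),
    ],
)

print(vague.is_scoring_ready(), specific.is_scoring_ready())  # False True
```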