ABSTRACT

The government-citizen relationship is conducted through service interactions: applications, renewals, inquiries, complaints, benefit deliveries, and the countless moments in which a citizen’s life intersects with government programs. Together, these interactions determine citizen profit: the net value generated for citizens by every contact with government, measured not by what government spends but by what citizens experience. When citizen profit is high, interactions feel effortless, respectful, and effective; eligible citizens access the benefits they need; government trust rises; and compliance with government programs improves. When citizen profit is low, interactions are burdensome, confusing, and dehumanizing; eligible citizens are screened out by administrative complexity; government trust erodes; and the downstream costs (program underutilization, repeat contacts, complaints, and democratic disengagement) dwarf the cost of the service improvements that would have prevented them.

This article presents the complete citizen profit measurement and improvement framework: six CX measurement tools with their strengths, limitations, and sample OKR Key Results; a six-stage citizen journey map covering Awareness through Ongoing Management; agency-specific OKR examples for SSA, DMV, City Permitting, VA, and IRS; seven service design principles with metrics for each; six digital equity gaps that government CX programs must address; and a guide to Executive Order 14058 compliance for federal agencies. Throughout, the article situates citizen profit not as a customer service nicety but as a core dimension of government mission — one that affects program effectiveness, equity outcomes, democratic trust, and the ultimate return on public investment.

- 23%: federal services rated ‘easy’ (Forrester U.S. Federal CX Index 2023), consistently below the commercial sector

- $180B: annual cost of avoidable government contacts (unnecessary phone and in-person interactions) in U.S. government

- 6: CX measurement tools (CSAT, NPS, CES, ACSI, RTDF, Mystery Shopper)

- 26: High Impact Service Providers (HISPs) designated under EO 14058 with mandatory CX accountability

1. Why Citizen Profit Is a Mission Issue, Not a Service Issue

The case for treating government-citizen interaction quality as a core performance metric — not a secondary concern.

In 2023, the Forrester Research U.S. Federal Customer Experience Index ranked the federal government last among all sectors measured, below airlines, cable companies, and health insurers. Only 23% of citizens rated their interactions with federal agencies as ‘easy.’ The average federal agency scored below the lowest-ranked private sector industry in ease, effectiveness, and emotional quality of the service experience.

These numbers are not merely embarrassing. They represent a genuine mission failure with measurable consequences. When the SNAP benefits application takes three hours to complete and requires documents that 40% of eligible households do not have, eligible families go without food assistance — not because they are ineligible, but because the service experience screened them out. When a veteran’s disability claim takes 122 days to process, that veteran spends four months in financial uncertainty that worsens the mental health condition the claim was filed to address. When a small business owner cannot navigate the permit system, economic opportunity is lost — sometimes permanently.

Administrative burden — the time, money, and psychological cost that citizens bear in interacting with government programs — is not a neutral feature of government. It is a form of policy. Programs with high administrative burden systematically exclude the most vulnerable citizens: those with the least time, the fewest resources, the lowest digital literacy, and the greatest distance from the English-language bureaucratic competencies that government processes assume. Reducing administrative burden is not a customer service initiative; it is an equity intervention.

Citizen profit — the net value generated for citizens per government interaction — captures this reality in measurable form. A citizen profit framework asks not only whether government programs are efficiently administered but whether citizens actually receive the value those programs were designed to deliver. It measures what citizens experience, not just what government does. And it creates accountability for the gap between program design and program experience that administrative burden produces.

2. The CX Measurement Toolkit: Six Tools, Their Trade-offs, and Their OKR Applications

A practical guide to selecting, deploying, and interpreting the six core citizen experience measurement instruments — and translating each into OKR Key Results.

Government agencies face a measurement paradox in citizen experience: the satisfaction surveys that produce the most comprehensive data are the ones citizens are least likely to complete, and the interactions that generate the most feedback are often the most extreme — either exceptional or deeply frustrating. A rigorous CX measurement program uses multiple complementary instruments to triangulate the citizen experience across different dimensions and interaction types.

The six tools below represent the complete citizen experience measurement toolkit. Each addresses a different dimension of the experience, uses a different collection mechanism, and produces a different type of actionable insight. A comprehensive citizen profit measurement program will use all six — weighted differently depending on the agency’s service mix and measurement maturity.

| Measurement Tool | Strengths | Limitations | Collection Method | Sample KR |
|---|---|---|---|---|
| Customer Satisfaction Score (CSAT): After a specific interaction (transaction, call, visit), ask “How satisfied were you with this interaction?” on a 1–5 or 1–10 scale. Report the % rating 4–5 (or 8–10). | Simple; high response rate; directly tied to a specific interaction; easy to trend over time; actionable at the transaction level | Measures satisfaction with the interaction, not the outcome; subject to recency bias; does not capture underlying drivers of satisfaction | Post-transaction digital survey; IVR prompt at the end of a call center contact; kiosk at service location exit | CSAT ≥ 85% for online benefit enrollment interactions; Phone service CSAT maintained ≥ 80% |
| Net Promoter Score (NPS): Ask, on a 0–10 scale, “How likely are you to recommend [agency/service] to a friend or colleague?” NPS = % Promoters (9–10) minus % Detractors (0–6). Score ranges from -100 to +100. | Single-question simplicity; high correlation with loyalty and advocacy behaviors; enables benchmarking across agencies; well understood by senior leadership | Less actionable than CSAT without follow-up questions; can be gamed; government context (no choice of provider) reduces the literal meaning of ‘recommend’ | Annual or semi-annual relationship survey, or triggered after a major service milestone | Agency NPS increased from -12 to +24 by Q4; Passport NPS ≥ +35 |
| Customer Effort Score (CES): Ask “How easy was it to [complete your transaction / resolve your issue] today?” on a 1–7 scale (Very Difficult to Very Easy). Report the mean score or the % rating 6–7. | Strongest predictor of service abandonment and complaint behavior; directly actionable, since high effort points to a specific process to fix; uniquely powerful for government, where ease is a key equity issue | Less familiar to senior leadership than NPS; requires follow-up to identify which step created the friction; newer methodology with less benchmark data | Post-transaction survey immediately after completion; ideal for digital self-service journeys | Benefits application CES ≥ 6.2/7.0 by Q3; Permit application CES improved from 3.8 to 5.5 |
| American Customer Satisfaction Index (ACSI): Standardized 100-point composite index measured through structured interview methodology. The federal government’s official citizen satisfaction benchmark, published annually in the ACSI Federal Government Report. | Enables apples-to-apples comparison across agencies and with the private sector; longitudinal trend data back to 1994; used in GPRA-M performance plans; independently administered | Annual cadence too slow for operational management; standardized survey methodology (less customizable); external administration creates dependency | Annual independent survey administered by ACSI; results published by fiscal year | ACSI score ≥ 72 by FY26 (from 68 baseline); ACSI score above sector average for agency type |
| Real-Time Digital Feedback (RTDF): Continuous, in-session feedback collection on digital platforms (thumbs up/down, 1-click satisfaction, open-text comment on specific pages or transactions), captured during the digital journey. | Near-zero survey fatigue (1–2 click response); captures reaction at the moment of experience; enables page-level and step-level diagnosis; high volume enables statistical confidence quickly | Selection bias (most engaged or most frustrated users respond); digital-only (misses non-digital interactions); requires analytics infrastructure to interpret | Embedded in web/mobile application; Profit.co API integration for KR auto-update | Digital service page satisfaction ≥ 78% positive; Benefits portal RTDF score maintained above 4.1/5.0 |
| Mystery Shopper / Observational Study: Trained evaluators pose as citizens and complete service transactions, rating each step of the experience against a standardized rubric covering accuracy, timeliness, courtesy, and process clarity. | Objective; captures the experience of users who would not complete a survey; reveals process failures invisible to survey data; enables consistent cross-location comparison | Resource-intensive; small sample size limits statistical inference; does not capture variation across staff or time-of-day patterns | Quarterly or semi-annual; can be contracted to CX research firms or conducted with internal evaluators | Mystery shopper overall score ≥ 4.2/5.0 across all service locations; 100% of locations audited annually |

Figure 1: Six Citizen Experience Measurement Tools — strengths, limitations, collection methods, and sample OKR Key Results
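The scoring rules in Figure 1 are simple enough to express directly. The Python sketch below computes CSAT as the share of top-box responses (4-5 on a 1-5 scale, 8-10 on a 1-10 scale), NPS as % Promoters minus % Detractors, and CES as both a mean and the share rating 6-7. The function names are illustrative, and the code assumes responses have already been validated to the correct scale.

```python
from statistics import mean


def percent_at_or_above(scores: list[int], threshold: int) -> float:
    """Share of responses (as a %) scoring at or above `threshold`."""
    if not scores:
        raise ValueError("no responses to score")
    return 100.0 * sum(1 for s in scores if s >= threshold) / len(scores)


def csat(scores: list[int], scale_max: int = 5) -> float:
    """CSAT: % rating 4-5 on a 1-5 scale, or 8-10 on a 1-10 scale."""
    thresholds = {5: 4, 10: 8}
    if scale_max not in thresholds:
        raise ValueError("scale_max must be 5 or 10")
    return percent_at_or_above(scores, thresholds[scale_max])


def nps(scores: list[int]) -> float:
    """NPS on a 0-10 scale: % Promoters (9-10) minus % Detractors (0-6)."""
    if not scores:
        raise ValueError("no responses to score")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


def ces(scores: list[int]) -> tuple[float, float]:
    """CES on a 1-7 scale: (mean score, % rating 6-7)."""
    # percent_at_or_above raises on empty input, so call it before mean()
    top_box = percent_at_or_above(scores, 6)
    return mean(scores), top_box


# Example with made-up responses
print(csat([5, 4, 3, 5, 2]))            # 3 of 5 responses rate 4-5
print(nps([10, 9, 9, 8, 7, 6, 2, 10]))  # 4 promoters, 2 detractors, 8 responses
print(ces([7, 6, 5, 7, 3]))
```

Note that NPS treats 7-8 ("passives") as neither promoters nor detractors, so they count toward the denominator only.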

2.1 Building a Multi-Instrument Measurement Architecture

The most effective citizen profit measurement programs combine instruments across three time scales: transactional (CSAT, CES, RTDF — collected immediately after each interaction); relationship (NPS, ACSI — collected periodically to assess the overall relationship); and observational (Mystery Shopper — conducted on a schedule to audit the objective experience independently of citizen self-report). Together, these three time scales provide a complete picture: what citizens feel in the moment, how they feel about the overall relationship, and what a trained observer finds when examining the service objectively.

Profit.co’s citizen experience module integrates all three time scales into a unified dashboard, with each data stream feeding the appropriate Key Results automatically. CSAT scores from post-transaction digital surveys update KRs within hours. NPS scores from quarterly relationship surveys update KRs when survey results are available. Mystery shopper assessments are manually entered by the program coordinator. The AI Progress Agent monitors all three streams simultaneously and flags when a transactional drop in CSAT predicts a future decline in the relationship NPS — enabling proactive intervention before the damage to the relationship becomes visible in the slower measurement.
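The source does not describe how the AI Progress Agent decides that a CSAT drop predicts an NPS decline. As a much simpler illustration of the same leading-indicator idea, the hypothetical rule below compares the most recent window of daily CSAT against the window before it and raises a flag when the drop exceeds a threshold. The 7-day window and 5-point threshold are arbitrary assumptions, not Profit.co settings.

```python
from statistics import mean


def csat_drop_alert(daily_csat: list[float], window: int = 7,
                    threshold_pts: float = 5.0) -> bool:
    """Hypothetical leading-indicator rule (not Profit.co's method).

    Returns True when the mean CSAT (%) of the latest `window` days is at
    least `threshold_pts` percentage points below the mean of the
    `window` days immediately before that.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    if len(daily_csat) < 2 * window:
        return False  # not enough history for a baseline comparison
    recent = mean(daily_csat[-window:])
    baseline = mean(daily_csat[-2 * window:-window])
    return baseline - recent >= threshold_pts


# Illustrative history: a 7-point drop in the latest week
history = [86.0] * 7 + [79.0] * 7
print(csat_drop_alert(history))
```

A production rule would also need to account for response volume, since a handful of responses can move a daily average sharply.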

3. The Six-Stage Citizen Journey Map

A universal journey framework for government service interactions — from Awareness through Ongoing Management — with diagnostic questions, metrics, and design interventions for each stage.

Journey mapping — the structured analysis of the citizen experience through each stage of a service interaction — is the most powerful diagnostic tool in the citizen profit toolkit. It shifts the design perspective from the agency’s internal process to the citizen’s lived experience, revealing friction points, confusion moments, and abandonment triggers that internal process analysis cannot see.

The six-stage framework below applies to virtually every government service interaction, from benefit applications to permit processing to tax filing. The stages are not always sequential (a citizen may move between stages non-linearly) and not all services have every stage, but the framework provides a comprehensive analytical structure for identifying where citizen profit is being created — and where it is being destroyed.

| Stage | Citizen Experience | Key Questions for Journey Analysis | Citizen Profit Metrics | Design Interventions |
|---|---|---|---|---|
| AWARENESS | The citizen becomes aware of the need for a government service or their eligibility for a benefit. | | | Proactive eligibility screening at all touchpoints; multilingual outreach; partnering with trusted community organizations as referral sources |
| INFORMATION SEEKING | The citizen seeks to understand what the service is, whether they qualify, and what they need to do to apply. | | | Plain language rewriting of all eligibility and application information; FAQ optimization based on actual call center inquiry analysis; chatbot for common information requests |
| APPLICATION / ENROLLMENT | The citizen completes the required application, enrollment, or transaction to access the service. | | | Application pre-fill using existing government data; real-time validation to catch errors before submission; save-and-return functionality; plain-language error messages |
| PROCESSING & DECISION | The government processes the application and makes an eligibility or approval determination. | | | Proactive status notifications at each processing milestone; case status self-service portal; processing time targets with public accountability; appeal pattern analysis to correct systematic errors |
| BENEFIT DELIVERY / SERVICE ACCESS | The citizen receives the benefit, service, or permission they applied for. | | | Proactive delivery confirmation; utilization monitoring with outreach to low-utilization recipients; channel choice expansion; simplified benefit access steps post-award |
| ONGOING MANAGEMENT | The citizen manages their ongoing relationship with the service: renewals, updates, queries, and appeals. | | | Proactive renewal reminders 90, 60, and 30 days before expiration; change of circumstance self-service; complaint tracking with resolution accountability; appeals process clarity |

Figure 2: Six-Stage Citizen Journey Map — stages, citizen experience, diagnostic questions, metrics, and design interventions for each
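One way to locate where citizen profit is being destroyed is to count how many citizens reach each stage and measure the drop-off at each transition. The sketch below assumes a simplified, linear journey; because real journeys are not always sequential and not every service has every stage, the caller can pass only the stages that apply. The stage counts in the example are hypothetical.

```python
JOURNEY_STAGES = (
    "Awareness",
    "Information Seeking",
    "Application / Enrollment",
    "Processing & Decision",
    "Benefit Delivery / Service Access",
    "Ongoing Management",
)


def stage_dropoff(counts: dict[str, int],
                  stages: tuple[str, ...] = JOURNEY_STAGES) -> list[tuple[str, str, float]]:
    """Percent of citizens lost at each transition between consecutive stages.

    Assumes a linear journey where counts[stage] is the number of citizens
    who reached that stage.
    """
    transitions = []
    for prev, cur in zip(stages, stages[1:]):
        if counts[prev] == 0:
            raise ValueError(f"no citizens recorded at stage {prev!r}")
        lost_pct = 100.0 * (counts[prev] - counts[cur]) / counts[prev]
        transitions.append((prev, cur, lost_pct))
    return transitions


def worst_transition(counts: dict[str, int],
                     stages: tuple[str, ...] = JOURNEY_STAGES) -> tuple[str, str, float]:
    """The transition with the largest percentage drop-off."""
    return max(stage_dropoff(counts, stages), key=lambda t: t[2])


# Hypothetical counts for one benefit program over one quarter
counts = {
    "Awareness": 1000,
    "Information Seeking": 800,
    "Application / Enrollment": 500,
    "Processing & Decision": 450,
    "Benefit Delivery / Service Access": 430,
    "Ongoing Management": 400,
}
print(worst_transition(counts))
```

Percentage drop-off (rather than raw counts) keeps late-stage transitions comparable even though fewer citizens reach them.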

4. Citizen Profit OKRs: Agency-Specific Examples

Five complete OKR templates for the most citizen-interaction-intensive government agency types — from SSA to city permitting — demonstrating how CX metrics become management accountability.

Citizen profit OKRs must be specific enough to be owned by a named program manager, measurable enough to be tracked with real data, and ambitious enough to represent genuine improvement rather than incremental adjustment. The examples below are designed to be adapted directly by agencies of each service type, with Key Results drawn from the measurement toolkit and journey map frameworks in the preceding sections.

| Agency | Objective | Sample Key Results |
|---|---|---|
| Social Security Administration | Make every Social Security interaction feel simple, certain, and dignified | |
| Department of Motor Vehicles (State) | Eliminate the DMV stigma: become the government agency residents cite as a model of service quality | |
| City Permitting Department | Make the permit experience competitive with the best online service platforms, not just better than last year | |
| VA Benefits Administration | Ensure every veteran receives the benefits they earned, with the dignity and speed they deserve | |
| IRS / State Tax Agency | Make tax compliance simple, fair, and respectful of every taxpayer’s time | |

Figure 3: Citizen Profit OKR Examples — five agency types with Objectives and Key Results grounded in CX measurement methodology
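Key Results such as “Agency NPS increased from -12 to +24” or “ACSI score ≥ 72 by FY26 (from 68 baseline)” are naturally tracked as progress from a baseline toward a target. The sketch below uses linear progress clamped to 0-100%, a common OKR convention; it is an assumption, not a formula stated in the source or a description of Profit.co's scoring. Because it divides by (target - baseline), it also handles KRs where lower is better, such as processing days.

```python
def kr_progress(baseline: float, target: float, current: float) -> float:
    """Linear progress (%) from baseline toward target, clamped to 0-100.

    Works for "higher is better" KRs (target > baseline) and
    "lower is better" KRs (target < baseline) alike.
    """
    if target == baseline:
        raise ValueError("target must differ from baseline")
    pct = 100.0 * (current - baseline) / (target - baseline)
    return max(0.0, min(100.0, pct))


print(kr_progress(-12, 24, 6))     # NPS KR: -12 baseline, +24 target, +6 today
print(kr_progress(68, 72, 70))     # ACSI KR: 68 baseline, 72 target, 70 today
print(kr_progress(122, 100, 111))  # hypothetical processing-days KR (lower is better)
```

Each call above returns 50.0: halfway from baseline to target. Clamping keeps a regression below baseline at 0% rather than reporting negative progress.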

5. Seven Service Design Principles for Government

The evidence-based design principles that separate government services citizens love from those they endure — with measurement approaches for each.

Government service design improvements do not happen by accident. They result from the deliberate application of citizen-centered design principles that prioritize the citizen’s experience over the agency’s administrative convenience. The seven principles below distill the practice of the government service design movement, grounded in more than a decade of research and practice from the UK’s Government Digital Service, the U.S. Digital Service, 18F, and comparable national digital transformation programs.

| # | Principle | The Imperative | Measurement Approach |
|---|---|---|---|
| 1 | Start With Needs, Not Assumptions | Conduct user research (interviews, observation, and usability testing) with actual citizens before designing or redesigning any service. The assumptions built into existing government service designs are frequently wrong and rarely tested. | Number of usability testing participants before any major digital service redesign; % of redesign decisions supported by user research evidence; user research sessions conducted per quarter |
| 2 | Design for the Hardest Cases First | If your service works well for the most vulnerable, most digitally disadvantaged, and most linguistically isolated citizens, it will work well for everyone. The converse is not true: services designed for the average user systematically exclude the most vulnerable. | Accessibility audit compliance score; % of user research participants from priority equity groups; service completion rate for LEP populations relative to the English-speaking population |
| 3 | Minimize Burden at Every Step | Every form field, required document, in-person visit, phone call, and process step is a burden the citizen bears. The default should be to eliminate or automate every burden that does not serve a genuine policy purpose. Never ask citizens twice for information the government already has. | Application field count (fewer = better); number of required documents (trend toward reduction); step count in application process; data pre-fill rate (% of fields automatically populated from existing government records) |
| 4 | Make Status Transparent and Proactive | Citizens should never have to call or visit to find out what happened to their application. Status should be visible, real-time, and proactively communicated at each stage transition. The volume of ‘where is my application’ contacts is a proxy for the failure of this principle. | Avoidable contact rate (% of contacts that are status inquiries; target: <10%); % of applicants receiving a proactive status update at each processing stage; case status self-service adoption rate |
| 5 | Resolve Problems at First Contact | Every unresolved contact becomes a repeat contact. First Contact Resolution (FCR), the % of citizen contacts fully resolved without requiring a follow-up, is among the strongest predictors of overall service satisfaction and the most direct driver of contact center cost. | First Contact Resolution rate (% of contacts fully resolved at first interaction); Repeat Contact Rate (% of contacts that are follow-ups to a prior unresolved issue); Agent empowerment score (% of agents with authority to resolve common issues without escalation) |
| 6 | Close Every Feedback Loop | Collecting citizen feedback without acting on it and telling citizens what was done is worse than not collecting it. Citizens who provided feedback and saw nothing change are more dissatisfied than those who were never asked. Every feedback collection must have a closed-loop response protocol. | % of complaints resolved within SLA (target: ≥90% within 10 business days); % of feedback submissions receiving acknowledgment within 2 business days; ‘You Said, We Did’ communications issued per quarter |
| 7 | Train for Empathy, Not Just Procedure | The single factor most predictive of citizen satisfaction in service interactions is whether the citizen felt heard, respected, and treated as an individual rather than a case number. Technical procedural accuracy is necessary but insufficient. Every citizen-facing staff member must be trained in active listening and empathetic communication. | Mystery shopper empathy scores; employee training completion rate for citizen experience curriculum; % of negative survey comments citing staff attitude vs. process issues (process issues are fixable; attitude issues require different interventions) |

Figure 4: Seven Service Design Principles — the design imperative, why it matters, and how to measure compliance for each principle
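Principles 4 and 5 define their metrics as simple ratios over contact records. The sketch below computes First Contact Resolution, Repeat Contact Rate, and the avoidable (status-inquiry) contact rate from a contact log. The `Contact` fields and the `"status_inquiry"` reason code are hypothetical; real contact-center systems will need their own mapping. FCR here is calculated over first contacts only, which is one of several definitions in use.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    case_id: str        # hypothetical identifier linking contacts about one issue
    reason: str         # hypothetical reason code, e.g. "status_inquiry"
    is_follow_up: bool  # True if this follows up a prior unresolved contact
    resolved: bool      # True if the issue was fully resolved during this contact


def contact_metrics(contacts: list[Contact]) -> dict[str, float]:
    """FCR, Repeat Contact Rate, and avoidable contact rate, all in %."""
    if not contacts:
        raise ValueError("no contacts to analyze")
    total = len(contacts)
    first_contacts = [c for c in contacts if not c.is_follow_up]
    # FCR over first contacts only: share resolved without needing a follow-up
    fcr = (100.0 * sum(c.resolved for c in first_contacts) / len(first_contacts)
           if first_contacts else 0.0)
    return {
        "first_contact_resolution": fcr,
        "repeat_contact_rate": 100.0 * sum(c.is_follow_up for c in contacts) / total,
        "avoidable_contact_rate":
            100.0 * sum(c.reason == "status_inquiry" for c in contacts) / total,
    }


# Hypothetical contact log
log = [
    Contact("A", "status_inquiry", is_follow_up=False, resolved=True),
    Contact("B", "benefit_question", is_follow_up=False, resolved=False),
    Contact("B", "benefit_question", is_follow_up=True, resolved=True),
    Contact("C", "status_inquiry", is_follow_up=False, resolved=True),
    Contact("D", "address_change", is_follow_up=False, resolved=True),
]
print(contact_metrics(log))
```

In this made-up log, two of five contacts are status inquiries, so the avoidable contact rate (40%) would miss Principle 4's <10% target.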

5.1 The Administrative Burden Reduction Imperative

Of the seven principles, minimizing administrative burden (Principle 3) has the greatest equity implications and the largest gap between current government practice and achievable best practice. OMB’s 2022 Burden Reduction Report identified that federal agencies impose over 11.5 billion hours of administrative burden on the public annually — the equivalent of every American adult spending more than 40 hours per year on government paperwork.
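The per-adult equivalence can be checked with one division. The 11.5 billion hours comes from the text; the adult population used here (about 258.3 million U.S. residents aged 18 and over in the 2020 Census) is an assumption added for the calculation.

```python
BURDEN_HOURS = 11.5e9  # annual federal paperwork burden, from the text
US_ADULTS = 258.3e6    # assumption: approx. U.S. residents aged 18+ (2020 Census)

hours_per_adult = BURDEN_HOURS / US_ADULTS
print(f"{hours_per_adult:.1f} hours per adult per year")
```

The result, roughly 44.5 hours, is consistent with the "more than 40 hours per year" comparison.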

Executive Order 14058 on Transforming Federal Customer Experience explicitly addresses this burden through the Paperwork Reduction Act process, requiring agencies to justify every piece of information they collect from the public and to eliminate collection that does not meet a genuine program need. The most powerful administrative burden reduction interventions involve using data the government already has — from the IRS, SSA, DHS, and Census Bureau — to pre-populate application forms, reducing the documentation burden on citizens who are already in government systems.
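Pre-filling from existing records also makes Principle 3's data pre-fill rate straightforward to compute. The sketch below fills whatever form fields an existing record already holds, lists the fields the citizen must still supply, and reports the pre-fill rate. The field names and record are hypothetical, and any real reuse of IRS, SSA, DHS, or Census data depends on the legal authorities and data-sharing agreements that govern it.

```python
def prefill(form_fields: list[str],
            known_record: dict[str, str]) -> tuple[dict[str, str], list[str], float]:
    """Pre-populate a form from data the government already holds.

    Returns (pre-filled values, fields the citizen must still supply,
    data pre-fill rate as a % of all form fields).
    """
    if not form_fields:
        raise ValueError("form has no fields")
    if len(set(form_fields)) != len(form_fields):
        raise ValueError("form field names must be distinct")
    prefilled = {f: known_record[f] for f in form_fields if f in known_record}
    remaining = [f for f in form_fields if f not in known_record]
    rate = 100.0 * len(prefilled) / len(form_fields)
    return prefilled, remaining, rate


# Hypothetical form and existing record
fields = ["name", "date_of_birth", "mailing_address", "household_size"]
record = {"name": "A. Citizen", "date_of_birth": "1970-01-01",
          "mailing_address": "1 Main St"}
values, still_needed, rate = prefill(fields, record)
print(still_needed, rate)
```

Returning the remaining fields, not just the rate, lets the application show the citizen only the questions the government cannot already answer.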

6. Digital Equity: The Six Gaps Every CX Program Must Address

The structural inequities in digital government service delivery — and the mitigation strategies that prevent digital transformation from becoming digital exclusion.

The shift to digital-first government service delivery is among the most consequential transformation trends in public administration. Digital services are faster, cheaper to deliver, and more scalable than in-person or telephone services. But they create serious equity risks: the populations who most need government services are often those least able to access them digitally. A digital-first strategy without intentional equity design becomes a digital exclusion strategy.

The six gaps below represent the most significant equity risks in digital government service delivery. Each has measurable characteristics, specific at-risk populations, and proven mitigation strategies. Agencies that take digital equity seriously — that measure access gaps, set OKRs for closing them, and hold program managers accountable for equity outcomes alongside efficiency metrics — can capture the efficiency benefits of digital transformation without sacrificing the equity commitments that public service requires.

| Equity Gap | Why It Matters for Citizen Profit | Mitigation Strategies |
|---|---|---|
| Internet Access Gap | As of 2023, approximately 21 million Americans lack broadband access; they are disproportionately rural, elderly, low-income, and minority. Digital-first service strategies systematically disadvantage these populations without intentional mitigation. | Maintain fully functional in-person and telephone service channels alongside digital; publish channel usage data disaggregated by ZIP code to identify digital access deserts; partner with public libraries and community centers as digital access points |
| Device & Digital Literacy Gap | Even among households with internet access, many citizens lack the devices, skills, or confidence to navigate complex online government service portals. Designing for the average internet user excludes a substantial minority. | Plain language; large touch targets; screen reader compatibility (WCAG 2.1 AA minimum); video tutorials; live chat support with human escalation; digital assistance offered at in-person locations |
| Language Access Gap | Executive Order 13166 requires federal agencies to provide meaningful access to individuals with Limited English Proficiency (LEP). In practice, however, most government digital services fall short of this requirement. | Translate all primary digital services into the top languages spoken by the served population; use certified translation (not machine-only); provide telephone interpretation services at all call centers (most can be contracted at minimal per-minute cost) |
| Disability Access Gap | Section 508 of the Rehabilitation Act requires federal digital services to be accessible to individuals with disabilities. Most government websites fail to meet this standard, creating legal exposure and excluding millions of potential users. | Conduct a Section 508 / WCAG 2.1 AA accessibility audit of all digital touchpoints annually; remediate all critical accessibility failures within 90 days; include users with disabilities in user research panels |
| Trust Gap | Among populations with historical trauma from government interactions (including immigrant communities, formerly incarcerated individuals, and communities subjected to discriminatory enforcement), low trust in government creates a barrier to service access that technical improvements cannot address. | Community navigator programs embedded in trusted organizations; community-based outreach and enrollment; service design that minimizes any sense of surveillance; privacy-first data practices; explicit data use transparency |
| Eligibility Complexity Gap | Complex eligibility rules, extensive documentation requirements, and confusing application processes systematically screen out the most vulnerable citizens, those who most need the services, in favor of more administratively capable applicants. | Benefit eligibility pre-screening tools; application data pre-fill from existing government records; document upload alternatives; administrative burden reduction (executive order compliance); ‘no wrong door’ referral networks across benefit programs |

Figure 5: Six Digital Equity Gaps — why each matters for citizen profit and mitigation strategies
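Measuring an access gap usually means disaggregating a metric and comparing each segment against a reference group, as in Principle 2's completion rate for LEP populations relative to the English-speaking population. The sketch below computes each segment's completion-rate gap in percentage points and flags segments beyond a tolerance. Segment names, counts, and the 5-point tolerance are hypothetical.

```python
def completion_gaps(started: dict[str, int], completed: dict[str, int],
                    reference: str) -> dict[str, float]:
    """Completion-rate gap (percentage points) of each segment vs. `reference`.

    Negative values mean the segment completes the service less often.
    """
    rates = {}
    for segment, n_started in started.items():
        if n_started == 0:
            raise ValueError(f"no starts recorded for segment {segment!r}")
        rates[segment] = 100.0 * completed.get(segment, 0) / n_started
    ref_rate = rates[reference]
    return {seg: rate - ref_rate for seg, rate in rates.items() if seg != reference}


def flag_gaps(gaps: dict[str, float], tolerance_pts: float = 5.0) -> list[str]:
    """Segments whose completion rate trails the reference by more than the tolerance."""
    return sorted(seg for seg, gap in gaps.items() if gap < -tolerance_pts)


# Hypothetical quarterly counts by language group
started = {"English": 1000, "Spanish (LEP)": 200, "Vietnamese (LEP)": 50}
completed = {"English": 800, "Spanish (LEP)": 130, "Vietnamese (LEP)": 30}
gaps = completion_gaps(started, completed, reference="English")
print(gaps, flag_gaps(gaps))
```

Small segments produce noisy rates, so a flag on a segment with few starts should be confirmed with more data or a significance test before it drives action.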

7. Executive Order 14058: The Federal Compliance Framework

What Executive Order 14058 on Transforming Federal Customer Experience requires — and how to operationalize compliance through OKRs.

Executive Order 14058, signed in December 2021, represents the most ambitious federal commitment to government customer experience in U.S. history. It designates 26 High Impact Service Providers (HISPs), requires customer experience improvement plans across the federal government, connects service quality to the President’s Management Agenda, and establishes a direct accountability path from agency-level CX performance to OMB and the White House. For federal agency leaders, EO 14058 compliance is not optional — and the most effective path to compliance is OKR-based accountability.

| EO 14058 Requirement | What It Requires in Practice | Measurement Standard | OKR Application |
|---|---|---|---|
| Customer Experience Designations | Identify High Impact Service Providers (HISPs), the federal programs with the greatest volume of citizen interaction, and designate them for enhanced CX accountability. Current HISPs include SSA, IRS, VA, USCIS, and 22 others. | HISPs must publish annual CX Action Plans with specific improvement commitments | Confirm whether your agency is designated as a HISP or serves a HISP’s clients; if it is a HISP, publish its CX Action Plan in Profit.co as a formal OKR commitment |
| Life Experience Focus | Agencies must map the citizen journey across major life experiences (having a child, losing a job, facing a disaster, starting a small business) that require interaction with multiple federal programs, and must improve coordination across agency touchpoints. | Cross-agency journey mapping; identification of hand-off friction points; joint OKRs across agencies serving common life experiences | Map your agency’s role in 2–3 major life experiences; identify cross-agency coordination gaps; build joint OKRs with partner agencies for at least one life experience |
| Digital Service Standards | Agencies must comply with the 21st Century Integrated Digital Experience Act (IDEA Act) and associated OMB guidance requiring modern, mobile-first digital services, plain language, accessibility, and consistent design patterns (USWDS). | Digital service assessments; accessibility audits; USWDS adoption tracking; mobile usability testing | WCAG 2.1 AA compliance rate ≥ 95% by Q4; USWDS design system adoption for all new digital services; mobile usability score ≥ 85% |
| Feedback Collection & Reporting | Agencies must collect and publicly report citizen feedback data, including satisfaction scores, complaints, and service quality metrics. OMB Circular A-11 Section 280 provides the standardized feedback collection methodology. | Quarterly public reporting of A-11 feedback data; standardized survey methodology; data published to Performance.gov | Configure Profit.co to auto-generate A-11 compliant quarterly CX reports; publish all satisfaction data on Performance.gov and the agency website |
| Equitable Service Delivery | EO 14058 explicitly requires agencies to identify and eliminate barriers to equitable service delivery for underserved communities, including barriers related to language, disability, digital access, and eligibility complexity. | Equity assessments of service delivery barriers; disaggregated performance data; targeted outreach programs; administrative burden reduction | Publish disaggregated satisfaction data by population segment quarterly; conduct an annual equity assessment of the top 3 services by volume; track eligibility awareness rates by demographic group |
| Employee Experience Connection | EO 14058 recognizes the proven connection between employee experience and citizen experience: agencies cannot deliver excellent citizen service with disengaged, burned-out, or under-resourced employees. | Employee satisfaction surveys; frontline staff voice in service redesign; workload management; empowerment to resolve issues at first contact | Employee engagement score ≥ 4.0/5.0 for citizen-facing staff; % of frontline staff reporting they have the tools and authority to serve citizens well ≥ 75% |

Figure 6: Executive Order 14058 Requirements — what each requires in practice, measurement standard, and OKR application

7.1 The A-11 Section 280 Reporting Standard

OMB Circular A-11 Section 280 establishes the standardized methodology for collecting and publicly reporting federal agency customer experience data. The standard requires agencies to collect feedback using standardized survey items (covering ease, effectiveness, and trust dimensions), report results quarterly to Performance.gov, and publish results disaggregated by service channel. Profit.co’s government platform is configured to generate A-11 Section 280 compliant reports directly from the integrated CX data dashboard — eliminating the separate reporting process that many agencies currently maintain.
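Quarterly, channel-disaggregated reporting of this kind is essentially a group-and-count operation. The sketch below assumes each response is a (date, channel, question, score) tuple on a 5-point agreement scale and reports the share of respondents who agree (score 4 or 5) per federal fiscal quarter, channel, and question. The tuple layout, question labels, and agree threshold are assumptions for illustration; this is not the official A-11 Section 280 specification or Profit.co's report generator. Fiscal quarters are used because the federal fiscal year begins on October 1.

```python
from collections import defaultdict
from collections.abc import Iterable


def fiscal_quarter(date_iso: str) -> str:
    """Federal fiscal quarter label for a YYYY-MM-DD date (FY begins October 1)."""
    year, month = int(date_iso[:4]), int(date_iso[5:7])
    fiscal_year = year + 1 if month >= 10 else year
    quarter = (month - 10) % 12 // 3 + 1  # Oct-Dec -> Q1, ..., Jul-Sep -> Q4
    return f"FY{fiscal_year}-Q{quarter}"


def agreement_summary(
    responses: Iterable[tuple[str, str, str, int]],
) -> dict[tuple[str, str, str], float]:
    """% agreeing (score 4-5 on a 1-5 scale) per (fiscal quarter, channel, question)."""
    tallies = defaultdict(lambda: [0, 0])  # key -> [agree count, total count]
    for date_iso, channel, question, score in responses:
        key = (fiscal_quarter(date_iso), channel, question)
        tallies[key][1] += 1
        if score >= 4:
            tallies[key][0] += 1
    return {key: 100.0 * agree / total for key, (agree, total) in tallies.items()}


# Hypothetical responses
responses = [
    ("2023-10-05", "digital", "ease", 5),
    ("2023-11-20", "digital", "ease", 3),
    ("2023-12-01", "phone", "ease", 4),
    ("2024-01-15", "digital", "trust", 4),
]
for key, pct in agreement_summary(responses).items():
    print(key, pct)
```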

The most important provision of the A-11 standard for OKR practitioners is the requirement to report on the ‘actions taken in response to customer feedback.’ This closed-loop requirement — documenting not just what feedback was received but what was done about it — maps directly to the OKR accountability framework. Agencies that set CX improvement OKRs and report quarterly on their progress against them automatically satisfy the spirit of the A-11 response requirement, because the OKR reporting documents exactly what actions were taken and what results they produced.

8. Building Your Citizen Profit Dashboard in Profit.co

A practical guide to configuring Profit.co as the operational hub for citizen profit measurement and management.

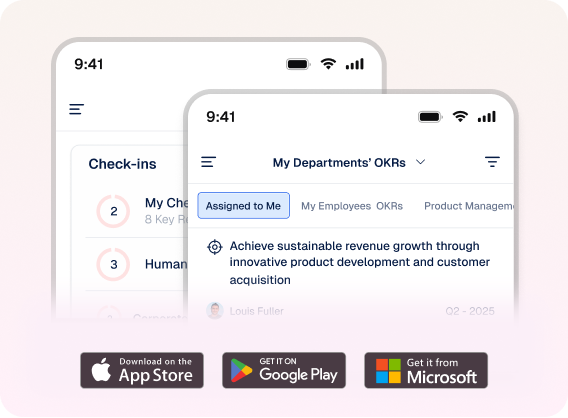

- Step 1: Connect your satisfaction data sources: Configure API integrations for digital feedback tools (post-transaction CSAT, RTDF) that feed KR progress scores automatically. For ACSI data, configure an annual manual upload. For mystery shopper results, establish a quarterly upload schedule with a designated data owner.

- Step 2: Configure the journey stage dashboard: Create a custom dashboard view organized by the six journey stages, with the relevant metrics for each stage displayed as real-time KR progress bars. This gives service design teams and program managers a stage-by-stage diagnostic view of where the citizen journey is performing and where it is failing.

- Step 3: Set your channel equity metrics: Configure disaggregated reporting by service channel (digital, telephone, in-person) and by population segment (language group, geography, income proxy where available). The AI Progress Agent monitors channel equity automatically and flags when digital efficiency gains are coming at the cost of equity.

- Step 4: Build the EO 14058 compliance dashboard (federal agencies): Map your HISP requirements to specific OKRs and KRs. Configure the A-11 reporting template in Profit.co to auto-generate from platform data quarterly. Connect the Performance.gov submission workflow.

- Step 5: Establish the monthly CX review cadence: Add citizen profit metrics to your monthly leadership meeting agenda — alongside financial and mission outcome metrics — so that service quality receives the same management attention as program outputs. Profit.co’s meeting management feature supports structured CX review agendas with pre-populated data.

- Step 6: Publish your citizen profit commitments: Make your CX OKRs public — on your agency website, in your HISP CX Action Plan, and in your Annual Performance Report. Public commitment creates accountability that internal management processes alone cannot produce, and signals to citizens that service quality is a leadership priority, not just a staff function.
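The stage-by-stage view in Step 2 depends on a KR progress calculation. The minimal Python sketch below assumes the common linear OKR convention, progress = (current - baseline) / (target - baseline), clamped to the range 0 to 1, with an unweighted average of KRs within each journey stage. Profit.co's own progress formula and roll-up weighting may differ, and the KR names and values used here are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class KeyResult:
    stage: str  # journey stage, e.g. "Awareness" or "Ongoing Management"
    name: str
    baseline: float
    target: float
    current: float


def kr_progress(kr):
    """Linear progress from baseline to target, clamped to [0, 1].

    Also handles 'decrease' KRs (target < baseline), such as wait times.
    """
    if kr.target == kr.baseline:
        return 1.0 if kr.current == kr.target else 0.0
    progress = (kr.current - kr.baseline) / (kr.target - kr.baseline)
    return max(0.0, min(1.0, progress))


def stage_progress(krs):
    """Unweighted average KR progress per stage, weakest stage first."""
    by_stage = defaultdict(list)
    for kr in krs:
        by_stage[kr.stage].append(kr_progress(kr))
    averages = {stage: sum(p) / len(p) for stage, p in by_stage.items()}
    return sorted(averages.items(), key=lambda item: item[1])


krs = [
    KeyResult("Awareness", "Eligible citizens aware of program (%)", 40, 60, 50),
    KeyResult("Ongoing Management", "Average renewal wait (days)", 30, 10, 25),
    KeyResult("Ongoing Management", "Renewal CSAT", 3.5, 4.5, 4.5),
]
print(stage_progress(krs))
```

Sorting weakest-first puts the stage where the journey is failing at the top of the view, which matches the diagnostic purpose described in Step 2.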

9. Conclusion: Government That Works for People

The vision of government that is easy to access, fast to respond, clear to understand, and respectful of the people it serves is not a utopian aspiration; it is an achievable operational standard that the best government service organizations in the world already meet. The UK Government Digital Service’s ‘Government as a Platform’ model, Estonia’s X-Road digital infrastructure, Singapore’s Singpass unified identity system, and the U.S. Digital Service’s portfolio of transformed federal interactions all show that government services can be as usable, as efficient, and as citizen-centered as the best commercial services, provided there is the will, the investment, and the accountability structure to make it happen.

Citizen profit measurement — the systematic tracking of what citizens experience across every interaction with government — is the accountability structure that makes this aspiration sustainable. Without it, service quality is managed by complaint volume and media coverage: reactive, crisis-driven, and systematically biased toward the most vocal and most connected citizens rather than the most vulnerable and most dependent. With it, service quality becomes a management discipline with named owners, quantified targets, regular review cadences, and public accountability — exactly the conditions under which improvement is possible.

The agencies that lead on citizen profit will not just score better on satisfaction surveys. They will achieve higher program participation rates among eligible populations, lower administrative costs through reduced repeat contacts and complaint handling, stronger democratic trust that makes program implementation easier, and the reputational position that attracts the talent needed to sustain continuous improvement. Citizen profit is the dimension of mission that citizens experience most directly — and the dimension most in need of the measurement and accountability discipline that OKRs can provide.