TL;DR

Government agencies are increasingly adopting the OKR framework to improve strategy execution and accountability. However, success depends less on writing goals and more on how the system is managed.

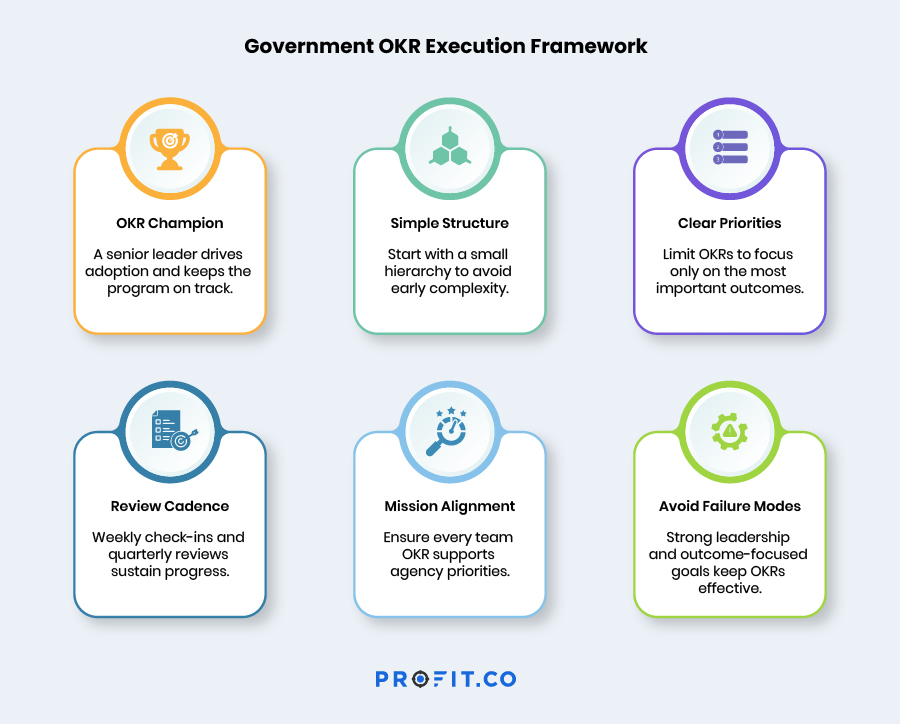

Effective government OKR programs share several core practices: appointing a strong OKR Champion, limiting hierarchy complexity, enforcing prioritization discipline, and maintaining a consistent review cadence. Weekly check-ins, monthly reviews, and quarterly resets keep goals connected to operational decisions.

Agencies must also avoid common failure modes such as leadership disengagement, initiative creep, siloed objectives, and OKR proliferation. With the right governance model and execution discipline, OKRs can become a powerful management system that connects mission priorities to everyday work.

Many government agencies are exploring the Objectives and Key Results (OKR) framework to improve strategy execution and accountability. The appeal is clear. OKRs create transparency, align teams around outcomes, and bring focus to the work that matters most.

But setting OKRs is only the beginning. The real challenge is running the system well over time.

A government OKR program can easily become another reporting mechanism if the organization does not build the right operating discipline around it. Teams may create too many objectives. Leadership attention may drift. Check-ins may become irregular. Over time, the framework loses its power.

The agencies that succeed with OKRs approach them differently. They treat OKRs as a management system rather than a planning exercise. Across successful public-sector implementations, several patterns recur: appointing a strong OKR Champion, limiting the complexity of the hierarchy, maintaining strict prioritization discipline, and establishing a clear review cadence. Together, these practices keep OKRs alive throughout the year and connected to real operational decisions.

The Critical Role of the OKR Champion

Every successful government OKR implementation has a clearly designated OKR Champion. This role is usually held by a senior leader, often at the Director or Deputy Director level. The individual must have enough authority and credibility to work across departments and guide the organization through change.

The Champion is not simply an administrator who manages the platform. They act as the culture carrier for the OKR program. Their responsibilities include:

- Modeling strong OKR behavior

- Coaching colleagues as they learn the framework

- Resolving conflicts between competing objectives

- Maintaining momentum when resistance appears

Introducing OKRs often changes how teams think about goals and accountability. Some resistance is natural, especially in large government organizations with established processes. The OKR Champion helps the organization navigate this transition. For this reason, the role should be formally designated with protected time.

In the early phases of implementation, successful agencies allocate:

- 20 to 30 percent of a senior leader’s time during the first two phases

- Around 10 percent of their time once the culture becomes established

When the champion role is treated as an afterthought and assigned to someone already at full capacity, the program usually struggles. Momentum slows, alignment issues remain unresolved, and the system gradually fades into the background.


Right-Sizing the OKR Hierarchy

Another common challenge in government OKR implementation is creating too many hierarchy levels too quickly. Large federal agencies often attempt to mirror their entire organizational structure within the OKR system, including levels such as Secretary, Deputy Secretary, Assistant Secretary, Program Office, Division, Team, and Individual. While this approach seems logical, it quickly becomes unmanageable. Leaders spend more time managing the structure than discussing outcomes. A better approach is to start small and expand gradually.

During the first year, most successful agencies limit their OKR hierarchy to three levels.

| Level | Purpose |
|---|---|
| Agency or Department | Defines mission-level priorities |
| Program or Division | Translates strategy into program outcomes |
| Individual | Connects daily work to strategic objectives |

Additional levels can be introduced later once the organization becomes comfortable with the framework. Tools like the Profit.co alignment tree enable gradual expansion of the hierarchy without disrupting the existing structure.


Quantity Discipline

One of the most common OKR quality problems in government is too many objectives. Teams often attempt to capture every priority, initiative, and mandate inside the OKR system. This can result in departments with 15 or even 20 objectives, each containing several Key Results. When this happens, prioritization disappears. The weekly check-in process becomes burdensome, and the framework loses its focus.

The discipline of OKRs is fundamentally about choosing what matters most. In practice, departments should limit themselves to the following ranges.

| Element | Recommended Range |
|---|---|
| Objectives per department | 3 to 5 |
| Key Results per objective | 2 to 5 |

If a department cannot fit its priorities within these limits, it usually means the organization has not yet completed the prioritization conversation. That conversation is not a limitation of OKRs. It is one of the framework’s greatest strengths.
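The ranges above are simple enough to check automatically before a quarter's OKRs are finalized. The following is a minimal sketch, assuming a hypothetical `Objective` record and a hypothetical `check_quantity_discipline` helper; neither is part of any particular OKR platform, and the thresholds are taken directly from the table.

```python
from dataclasses import dataclass, field

# Recommended ranges from the table above.
MIN_OBJECTIVES, MAX_OBJECTIVES = 3, 5
MIN_KEY_RESULTS, MAX_KEY_RESULTS = 2, 5


@dataclass
class Objective:
    """Hypothetical record: an objective title plus its Key Result titles."""
    title: str
    key_results: list = field(default_factory=list)


def check_quantity_discipline(department, objectives):
    """Return a list of warnings; an empty list means the department is in range."""
    warnings = []
    count = len(objectives)
    if not MIN_OBJECTIVES <= count <= MAX_OBJECTIVES:
        warnings.append(
            f"{department}: {count} objectives "
            f"(recommended {MIN_OBJECTIVES} to {MAX_OBJECTIVES})"
        )
    for objective in objectives:
        kr_count = len(objective.key_results)
        if not MIN_KEY_RESULTS <= kr_count <= MAX_KEY_RESULTS:
            warnings.append(
                f"{department} / {objective.title}: {kr_count} Key Results "
                f"(recommended {MIN_KEY_RESULTS} to {MAX_KEY_RESULTS})"
            )
    return warnings
```

A warning from a check like this is a prompt to return to the prioritization conversation, not a reason to split or merge objectives mechanically.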

The Prioritization Test

There is a simple question that helps teams clarify their real priorities. Imagine that your team could work on only three things this quarter. What would they be? Those are your Objectives.

Everything else falls into one of three categories:

- KPIs that should continue to be monitored

- Ongoing operational responsibilities

- Priorities that may belong in a future quarter

OKRs are not meant to track everything an agency does. Their purpose is to focus attention on the few outcomes that matter most right now.

The Government OKR Execution Framework rests on six interconnected practices.

The OKR Management Cadence

Setting OKRs is only the first step. What keeps them effective throughout the year is the management cadence that surrounds them. An organization that sets goals in October and reviews them the following September lacks a management system. It has a compliance exercise.

The real strength of OKRs comes from the rhythm of check-ins, reviews, and resets that connect strategy to everyday execution. A well-run government OKR program typically operates across four time horizons.

| Cadence | Audience | Purpose and Agenda | Platform Support |
|---|---|---|---|
| Weekly | Individual and Team | Update Key Result progress, flag blockers, track momentum | Automated check-in reminders and AI summaries |
| Monthly | Department or Program | Review progress dashboards, address at-risk results, adjust resources | Live dashboards with AI-generated insights |
| Quarterly | Agency Leadership | Review results, conduct retrospective, define next cycle priorities | Performance summaries and OKR planning |
| Annual | Executive Leadership and Oversight | Strategic review, performance reporting, budget justification | Mission impact dashboards and reporting |

This cadence ensures that strategic goals remain connected to operational decision-making.

The Weekly Check-In

The weekly check-in is the foundation of the entire OKR system. It is also the practice most frequently skipped. When teams stop updating progress each week, several problems quickly appear: progress data becomes outdated, risks are not surfaced early, and accountability gradually weakens.

A typical check-in is simple and takes only five to ten minutes per Key Result. Teams update the progress percentage, note any blockers, and add a brief comment explaining the change. Modern OKR platforms simplify this process. Automated reminders prompt updates, and AI-generated summaries prepare management-ready insights for the next review meeting. This reduces reporting effort while keeping leaders informed about progress and risks.
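To make the check-in concrete, here is a minimal sketch of what a weekly update might record and how skipped check-ins could be surfaced. The `CheckIn` fields mirror the three items described above (progress percentage, blockers, comment); the record layout, the `stale_key_results` helper, and the seven-day threshold are illustrative assumptions rather than the behavior of any specific platform.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class CheckIn:
    """Hypothetical weekly update for one Key Result."""
    key_result: str
    progress_pct: float   # 0 to 100
    blockers: str         # empty string when nothing is blocked
    comment: str          # brief note explaining the change
    updated_on: date


def stale_key_results(check_ins, today, max_age_days=7):
    """Return Key Results whose most recent check-in is older than max_age_days."""
    latest = {}
    for check_in in check_ins:
        seen = latest.get(check_in.key_result)
        if seen is None or check_in.updated_on > seen.updated_on:
            latest[check_in.key_result] = check_in
    cutoff = today - timedelta(days=max_age_days)
    return sorted(kr for kr, c in latest.items() if c.updated_on < cutoff)
```

Running a check like this ahead of the monthly review shows which Key Results have gone quiet, which is usually the first sign that the weekly habit is slipping.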

The Quarterly Review and Reset

If the weekly check-in keeps the system running, the quarterly review is where strategy evolves. This meeting performs three important functions at the same time.

- First, it reviews the progress made during the previous quarter.

- Second, it evaluates how that progress contributed to the agency’s mission outcomes.

- Third, it defines the priorities for the next cycle.

The process usually begins by scoring each Key Result on a scale from 0.0 to 1.0. Leaders then review the results in context. The key question is simple: did the organization make meaningful progress toward the Objective? From there, the group decides how each OKR should evolve. Some Key Results continue into the next quarter. Others are redesigned. In some cases, the entire objective changes because new policies, budgets, or leadership priorities have emerged.
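The article does not prescribe how a Key Result is converted into a 0.0 to 1.0 score. One common convention, shown here purely as an assumption, is linear progress from a starting baseline to the target, clamped to the scale and rounded to one decimal. The function name `score_key_result` and its parameters are hypothetical.

```python
def score_key_result(baseline, target, current):
    """Score a Key Result on the 0.0 to 1.0 scale.

    Assumes linear progress from baseline to target. Works for targets that
    go up (raise satisfaction from 60 to 80) or down (cut processing time
    from 30 days to 20 days).
    """
    if target == baseline:
        raise ValueError("target must differ from baseline")
    fraction = (current - baseline) / (target - baseline)
    clamped = max(0.0, min(1.0, fraction))
    return round(clamped, 1)
```

For example, a Key Result to cut processing time from 30 days to 20 days that ends the quarter at 23 days has covered 7 of the 10 days it set out to remove, so `score_key_result(30, 20, 23)` returns `0.7`. The number is only the starting point; leaders still review it in context, as described above.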

OKR Scoring: The 0.0 to 1.0 Scale

A consistent scoring model helps organizations evaluate progress objectively.

| Score | Rating | What It Means |
|---|---|---|
| 0.0 to 0.3 | Below Expectations | Significant obstacles prevented progress |
| 0.4 to 0.6 | Partial Progress | Progress occurred but the target was not achieved |
| 0.7 to 0.9 | Strong Progress | Ambitious target largely achieved |
| 1.0 | Full Achievement | Target achieved completely |

Government organizations often have a natural instinct to aim for 1.0 on every objective. This reflects a culture built around compliance and full obligation rates. The philosophy behind OKRs is different. A consistent 0.7 to 0.9 score usually indicates strong performance, because it shows that teams set ambitious goals and pushed toward them. On the other hand, a pattern of constant 1.0 scores may indicate that targets were too conservative.
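The rating bands and the warning about constant 1.0 scores can be expressed in a few lines. This is a sketch under stated assumptions: scores are rounded to one decimal before banding (the table lists one-decimal ranges), and "a pattern of constant 1.0 scores" is interpreted as three consecutive cycles, a threshold chosen here for illustration only. Both function names are hypothetical.

```python
def rating_for(score):
    """Map a 0.0 to 1.0 score to the rating bands in the table above."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    rounded = round(score, 1)  # assumption: bands apply to one-decimal scores
    if rounded <= 0.3:
        return "Below Expectations"
    if rounded <= 0.6:
        return "Partial Progress"
    if rounded <= 0.9:
        return "Strong Progress"
    return "Full Achievement"


def looks_conservative(score_history, min_cycles=3):
    """Flag a team whose most recent min_cycles scores were all 1.0.

    min_cycles=3 is an illustrative threshold, not a rule from the article.
    """
    recent = score_history[-min_cycles:]
    return len(recent) >= min_cycles and all(round(s, 1) == 1.0 for s in recent)
```

A flag from `looks_conservative` is a conversation starter for the quarterly review, not a verdict: some targets are legitimately binary or mandated, and a run of 1.0 scores on those says nothing about ambition.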

A Common Pitfall: OKR Washing

One of the most damaging behaviors in an OKR program is what practitioners sometimes call OKR washing. This occurs when teams set conservative targets simply to guarantee a perfect score. While this behavior may satisfy reporting expectations, it removes the ambition that makes OKRs valuable.

A healthier culture celebrates ambitious progress. Leaders should recognize strong 0.7 scores and question comfortable 1.0 outcomes. The key question is simple: what would it have taken to reach a 0.9 here? If the answer reveals that a much harder target was possible, the next cycle should aim higher.

4 Common Failure Modes in Government OKR Programs and How to Avoid Them

Even well-designed OKR programs can struggle if certain patterns begin to appear. Over time, several failure modes tend to repeat across government organizations adopting the framework. The good news is that these issues are predictable. Once leaders understand them, they can put simple safeguards in place to keep the system healthy.

1. Leadership Abandonment

The most damaging failure occurs when senior leadership publicly endorses OKRs but stops practicing them. In the early months of an implementation, leaders often speak enthusiastically about the framework. But if they later stop attending review meetings, stop referencing OKRs in leadership discussions, or stop linking resource decisions to OKR progress, the message to the organization quickly changes. Teams begin to interpret the framework as a compliance requirement rather than a management tool. Most OKR programs can survive this pattern for a short period. After one or two quarters, however, participation declines and the system gradually becomes paperwork rather than a driver of performance.

Countermeasure: The OKR Champion should maintain a direct line of communication with the agency head and have clear authority to escalate leadership disengagement when it appears. Leadership OKR reviews should be treated as protected commitments on the executive calendar. In addition, the agency head’s own OKRs should remain visible to the entire organization within the platform. This transparency demonstrates accountability from the top and reinforces that the framework applies to everyone.

2. Initiative Creep

Another common issue appears when teams shift their focus away from outcomes and toward activities. Organizations that are new to OKRs often find it easier to list the work they plan to do rather than the results they expect to achieve. As a result, Key Results slowly turn into task lists or activity trackers. When this happens, the OKR system begins to resemble a project management tool. Teams report that they completed their initiatives, yet the underlying mission outcomes remain unchanged. Over time, the connection between OKRs and mission impact weakens.

Countermeasure: Regular quality reviews help prevent this drift. Tools such as Profit.co’s AI Quality Agent can automatically flag Key Results that appear to measure activities rather than outcomes. In addition, OKR Champions should conduct periodic audits using a simple test: if we completed this Key Result but the broader mission objective was still not achieved, would we consider the result successful? If the answer is yes, the Key Result is likely measuring activity instead of impact and should be rewritten.

3. Siloed OKRs

Another risk emerges when departments create OKRs independently without aligning them to agency priorities or to each other. In these situations, teams often optimize their own objectives successfully. Individual departments may report strong scores and visible progress. Yet the organization as a whole may still struggle to advance its most important mission goals. It is entirely possible for two departments to each achieve a 0.9 score on their respective OKRs while a critical cross-agency initiative remains underfunded or unaddressed.

Countermeasure: Alignment reviews should occur before OKRs are finalized. Using tools such as the Profit.co alignment tree, leaders can visualize how objectives connect across departments. During these reviews, department heads examine each other’s proposed OKRs and identify dependencies or overlaps. When coordination is necessary, teams can create shared Key Results aligned with cross-cutting objectives. This ensures that collaboration becomes part of the measurement system rather than an informal expectation.
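A lightweight version of this alignment review can be run on a spreadsheet export before the meeting. The sketch below assumes each department objective optionally names the agency-level objective it supports; the data shape and the `unaligned_objectives` helper are hypothetical and do not represent how the Profit.co alignment tree works internally.

```python
from typing import Dict, List, Optional, Set


def unaligned_objectives(
    agency_objective_ids: Set[str],
    department_objectives: Dict[str, Optional[str]],
) -> List[str]:
    """Return department objectives with no valid agency-level parent.

    department_objectives maps an objective title to the ID of the agency
    objective it supports, or None when no link was declared.
    """
    return sorted(
        title
        for title, parent_id in department_objectives.items()
        if parent_id is None or parent_id not in agency_objective_ids
    )
```

Objectives returned by a check like this are not necessarily wrong, but each one deserves a question in the alignment review: which agency priority does this serve, and does another department depend on it?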

4. OKR Proliferation

As OKR programs mature, organizations often begin adding more of everything. New objectives appear. Additional Key Results are introduced. More layers are added to the hierarchy. Reporting requirements gradually expand. While each change may seem reasonable in isolation, the combined effect can be overwhelming. Within a few years, the system becomes more complicated and burdensome than the processes it originally replaced.

Countermeasure: The best defense is disciplined simplicity. Organizations should maintain strict limits: no department should have more than five Objectives, no Objective should contain more than five Key Results, and new hierarchy levels should only be added when another layer is removed. The OKR Champion plays an important role here. Their responsibility is not only to help the system grow but also to actively prune complexity as it accumulates.

Conclusion: The Government OKR Opportunity

When adapted thoughtfully, the OKR framework offers government agencies a powerful way to close the gap between strategy and execution. It introduces a shared language for accountability, a clear structure for alignment, and a management cadence that connects strategic priorities to everyday work.

The agencies that succeed with OKRs tend to share several characteristics. Leadership remains actively involved. An empowered OKR Champion guides the process. Teams prioritize ruthlessly. And the platform supporting the system makes the mechanics simple rather than burdensome.

Technology plays an important role here. Platforms designed specifically for government strategy execution can automate check-ins, visualize alignment, and generate performance insights that make the system easier to manage. But tools alone do not create success. The real transformation happens when leaders are willing to set ambitious goals and hold themselves accountable for achieving them. That willingness is what ultimately distinguishes the highest performing government organizations.


OKRs help government organizations translate mission goals into measurable outcomes. They improve transparency, align teams across departments, and create accountability for strategic priorities.

Most successful implementations appoint a senior leader as an OKR Champion, typically at the Director or Deputy Director level, responsible for guiding adoption and maintaining momentum.

Departments should typically maintain 3 to 5 Objectives, with 2 to 5 Key Results per Objective. Limiting the number ensures focus and prevents the system from becoming overly complex.

A typical OKR cadence includes:

- Weekly check-ins for progress updates

- Monthly reviews at the department level

- Quarterly strategic reviews with leadership

- Annual reviews for long-term mission performance

The most frequent failure occurs when leadership stops actively participating. When leaders stop referencing OKRs in decisions and reviews, teams quickly view the system as a reporting exercise rather than a management framework.
